Top 10 Cloud Cost Optimization Strategies for Investor-Ready Startups in 2026

In the founder's journey from idea to a high-valuation technical asset, cloud spend is not just an expense; it's a direct reflection of architectural efficiency and scalability. Generic cost-cutting advice misses the point. For a startup founder building an investor-ready MVP, every dollar saved on infrastructure is a dollar that can be reinvested into product, growth, or extending runway.

This isn't about being cheap; it's about being strategic. The difference between a prototype and a production-grade asset lies in how it's engineered for both performance and financial sustainability. At Buttercloud, we guide founders to build 'technical moats,' where cost efficiency is an embedded feature, not an afterthought. To truly master your cloud spending and ensure your engineering valuation reflects smart financial management, you need to understand and implement proven cloud cost optimization best practices.

This listicle moves beyond the obvious, presenting 10 cloud cost optimization strategies tailored for the high-stakes environment of startups seeking to impress investors and build for the long term. These are the levers we use as Mentor-Architects to ensure your MVP passes rigorous VC technical due diligence and stands as a testament to capital efficiency. You'll learn specific, actionable methods for right-sizing, managing capacity, optimizing storage, and negotiating with providers. We'll cover quick wins and long-term architectural patterns, all focused on turning your infrastructure from a liability into an investable asset.

1. Right-Sizing Compute Instances

Right-sizing is a foundational cloud cost optimization strategy that directly addresses over-provisioning. It involves analyzing the actual performance metrics of your compute instances, such as CPU and memory utilization, and then selecting an instance type that matches your workload's real needs. For a startup building its MVP, this is often the fastest route to significant savings, frequently reducing compute spend by 20-30% without affecting performance.

The common pitfall is to provision for a theoretical peak load that may never materialize, leaving expensive resources idle. By adopting a data-driven approach, you ensure your infrastructure is efficient, cost-effective, and aligned with your current user traffic—building a lean technical asset that passes investor scrutiny.

Actionable Implementation

To implement right-sizing effectively, start by gathering data. Use native tools like AWS Compute Optimizer, Azure Advisor, or Google Cloud Recommender. These services analyze historical utilization and provide specific instance-change recommendations.

For a startup with evolving product-market fit, a monthly review cycle is crucial. As your traffic patterns stabilize post-launch, what was once a necessary c5.xlarge instance might be perfectly served by a c5.large, cutting costs immediately.
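The right-sizing decision can be sketched as a small calculation: take the 95th-percentile CPU and memory utilization from your monitoring data, project it onto each size in the same family, and pick the smallest size that stays under a headroom target. This is a minimal sketch under stated assumptions, not how AWS Compute Optimizer works internally; the 70% headroom target and the `recommend_size` helper are hypothetical, and the ladder lists the published vCPU and memory figures for three c5 sizes.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class InstanceSize:
    name: str
    vcpus: int
    memory_gib: float


# Sizes in one family, smallest first (vCPU / GiB for the c5 generation).
C5_LADDER = [
    InstanceSize("c5.large", 2, 4.0),
    InstanceSize("c5.xlarge", 4, 8.0),
    InstanceSize("c5.2xlarge", 8, 16.0),
]


def p95(samples):
    """Nearest-rank 95th percentile of utilization samples (0-100)."""
    ordered = sorted(samples)
    rank = max(1, (95 * len(ordered) + 99) // 100)  # integer ceiling of 0.95 * n
    return ordered[rank - 1]


def recommend_size(current, cpu_samples, mem_samples, ladder=C5_LADDER, target_pct=70.0):
    """Return the smallest size whose projected p95 CPU and memory stay at or below target_pct."""
    # Convert percentages on the current instance into absolute vCPUs and GiB in use.
    used_vcpus = current.vcpus * p95(cpu_samples) / 100
    used_mem_gib = current.memory_gib * p95(mem_samples) / 100
    for size in ladder:
        cpu_pct = 100 * used_vcpus / size.vcpus
        mem_pct = 100 * used_mem_gib / size.memory_gib
        if cpu_pct <= target_pct and mem_pct <= target_pct:
            return size
    # Nothing in the ladder has enough headroom: keep the largest and flag it for review.
    return ladder[-1]
```

For example, a c5.xlarge with a p95 CPU of 30% and a p95 memory of 25% projects to 60% CPU and 50% memory on a c5.large, so the sketch recommends the smaller size. Using p95 rather than the average keeps short, legitimate peaks from being planned away.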

A common scenario we see is a Series A company discovering that 40% of its compute fleet is over-provisioned after its first formal utilization audit. Implementing right-sizing not only cuts direct costs but also demonstrates operational maturity to investors.

Strategic Tips for Founders:

Follow the 80/20 Rule: Begin by identifying the top 20% of your instances that account for 80% of your compute costs. Focus your initial efforts there for maximum impact.

Monitor Before, During, and After: Before making a change, establish a baseline with CloudWatch or Azure Monitor dashboards. After downgrading, monitor closely to ensure performance remains stable.

Set Utilization Alerts: To prevent under-sizing, configure alerts that notify you of sudden utilization spikes. This allows you to react quickly and scale up if needed, protecting the user experience.
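The 80/20 tip translates into a few lines of code once you export per-resource monthly costs from your billing data. The `top_cost_drivers` helper and the sample figures below are hypothetical; the point is simply to sort by cost and stop once the chosen share of spend is covered.

```python
def top_cost_drivers(monthly_costs, share=0.8):
    """Return the highest-cost resources that together account for at least `share` of spend.

    monthly_costs: mapping of resource name -> monthly cost in dollars.
    """
    total = sum(monthly_costs.values())
    selected, running = [], 0.0
    # Walk resources from most to least expensive until the target share is covered.
    for name, cost in sorted(monthly_costs.items(), key=lambda kv: kv[1], reverse=True):
        if running >= share * total:
            break
        selected.append(name)
        running += cost
    return selected


costs = {"api-1": 500, "api-2": 300, "worker": 100, "cron": 60, "bastion": 40}
```

With these sample costs, `top_cost_drivers(costs)` returns `["api-1", "api-2"]`: two of five resources cover 80% of the bill, so that is where a first right-sizing review should start.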

2. Reserved Instances and Savings Plans

Committing to usage is one of the most powerful cloud cost optimization strategies available, offering deep discounts in exchange for predictable spend. Reserved Instances (RIs) and their more flexible successors, Savings Plans, let you commit to specific instance capacity (RIs) or to a fixed hourly spend (Savings Plans) for a 1- or 3-year term at a fraction of the on-demand price. For a startup with a production-grade MVP that has established a predictable baseline workload, this is the single largest lever for cost reduction, with discounts ranging from roughly 30% up to 72% depending on term, payment option, and plan type.

While on-demand pricing offers maximum flexibility, it's also the most expensive option. By forecasting your minimum required compute power, you can secure significant savings. Compute Savings Plans, in particular, apply across instance families, sizes, and even regions, and also cover Fargate and Lambda usage, making them a strong fit for a growing technical asset that needs to adapt without forfeiting discounts.

Actionable Implementation

Your first step is to analyze your historical usage to establish a reliable baseline. Use native tools like the AWS Cost Explorer Purchase Recommendations feature, which analyzes your past on-demand spending and suggests an optimal commitment level. It will model potential savings for different plans (e.g., All Upfront, Partial Upfront, No Upfront) and term lengths.

For a startup that has just found product-market fit, a 1-year Savings Plan is the ideal starting point. It balances a significant discount with the flexibility needed to evolve your architecture. As your platform matures and certain workloads, like data processing or machine learning inference, become highly predictable, you can lock in even deeper savings with 3-year commitments for those specific components.

As Fractional CTOs, we guided a Series A startup that was paying $15,000 monthly for on-demand compute. After analyzing their stable baseline, we implemented a 1-year Savings Plan that reduced their bill to just $4,500 per month, directly boosting their cash runway and demonstrating financial discipline to their board.

Strategic Tips for Founders:

Commit to Your Baseline, Not Your Peak: Analyze your usage data and commit to covering only about 70-80% of your lowest consistent hourly usage. Leave the remaining 20-30% as on-demand to handle unexpected traffic spikes or scaling events.

Start with 1-Year, No Upfront Plans: For your first commitment, choose a 1-year term with no upfront payment. This minimizes your cash-flow impact and risk while still delivering substantial savings. You can graduate to longer terms or partial/full upfront payments later for greater discounts.

Review and Adjust Quarterly: Your infrastructure needs will change. Set a recurring calendar event to review your Savings Plan coverage quarterly. As your baseline usage grows, you can purchase additional, smaller plans to stack on top of your existing commitment, ensuring you don’t leave savings on the table.
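The "commit to your baseline" rule can be expressed as simple arithmetic. One detail worth modeling: an AWS Savings Plan commitment is stated in dollars per hour at Savings Plan rates, so covering $X/hour of on-demand usage takes a commitment of roughly X multiplied by (1 - discount). This is a minimal sketch; the 75% coverage default, the 30% discount, and the helper name are assumptions, and the real discount depends on plan type, term, payment option, and instance family.

```python
HOURS_PER_MONTH = 730  # common approximation used in cloud pricing


def savings_plan_commitment(hourly_on_demand_spend, coverage=0.75, discount=0.30):
    """Suggest an hourly commitment (at Savings Plan rates) covering part of the baseline.

    hourly_on_demand_spend: hourly on-demand-equivalent compute spend, in dollars.
    coverage: fraction of the lowest hourly spend to commit (0.70-0.80 per the tip above).
    discount: assumed Savings Plan discount versus on-demand (hypothetical default).
    Returns (hourly_commitment, estimated_monthly_savings).
    """
    baseline = min(hourly_on_demand_spend)  # lowest observed hourly usage
    covered_on_demand = baseline * coverage  # on-demand dollars/hour the plan will absorb
    commitment = covered_on_demand * (1 - discount)  # what you actually commit to per hour
    monthly_savings = covered_on_demand * discount * HOURS_PER_MONTH
    return commitment, monthly_savings
```

For hourly spend of $10 to $20, the sketch suggests committing about $5.25/hour, which absorbs $7.50/hour of on-demand usage. If your data contains outage hours with near-zero usage, a low percentile is a safer baseline than the raw minimum.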

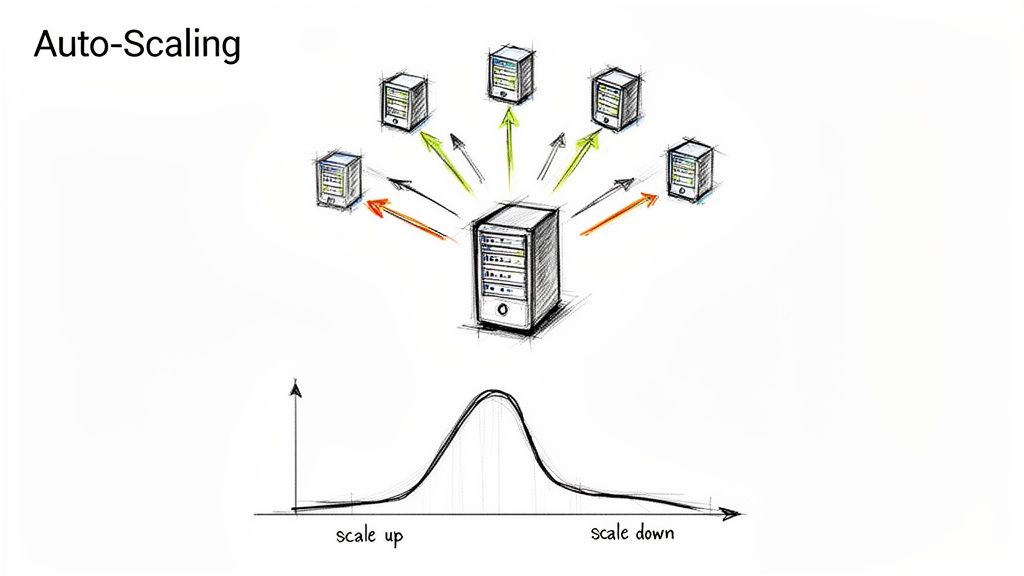

3. Auto-Scaling and Demand-Based Capacity Management

Auto-scaling is a core discipline in cloud-native engineering that matches compute capacity to real-time demand. Instead of paying for a fixed fleet of servers 24/7, auto-scaling automatically adds or removes instances based on metrics like CPU utilization or request count. For a startup with variable traffic, such as a fintech app with market-hour peaks or a consumer platform with viral potential, this is non-negotiable for building a capital-efficient technical asset.

Properly configured auto-scaling directly translates to cost savings, often reducing compute spend by 30-50%, while simultaneously improving reliability during unpredictable traffic spikes. It ensures your product can handle a 10x surge from a product launch or press feature without manual intervention, proving to investors that your architecture is built for growth, not just for today's load.

Actionable Implementation

To implement this cloud cost optimization strategy, start with your provider's native tools: AWS Auto Scaling groups, Google Cloud Autoscaler, or the Kubernetes Horizontal Pod Autoscaler (HPA). Begin by defining policies based on standard metrics like average CPU or memory usage.

For example, an AI-powered startup can use scheduled scaling to reduce its GPU instance count by 70% during off-hours when model training jobs are not running. As you gather data, you can graduate to more advanced policies based on custom metrics, like the depth of a processing queue, for more intelligent and responsive scaling.

We guided a B2B SaaS platform whose costs were spiraling due to over-provisioning for peak loads. By implementing target-tracking auto-scaling policies, we stabilized their daily compute spend from a volatile $800-$3,000 range to a predictable $1,200, demonstrating operational control and improving their margin.

Strategic Tips for Founders:

Scale Up Fast, Scale Down Slow: Configure your policies to add capacity aggressively to protect the user experience during a spike. Be more conservative when removing capacity to avoid oscillations and ensure stability.

Optimize Startup Time: Auto-scaling is only effective if new instances can serve traffic quickly. Monitor your scale-up latency and focus engineering efforts on reducing application boot times.

Use Custom Metrics: Don't limit yourself to CPU. For an ML startup, scaling based on the inference queue length is far more effective. For a data pipeline, it might be SQS queue depth. This aligns scaling directly with business value.
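To make "scale up fast, scale down slow" concrete, the sketch below combines the Kubernetes HPA's documented replica formula, desired = ceil(current * currentMetric / targetMetric) with a 10% default tolerance, and a scale-down stabilization window that only shrinks to the highest recommendation seen recently. It is a simplified model for reasoning about policies, not the HPA controller itself; the `Scaler` class, the window length, and the replica limits are hypothetical.

```python
import math
from collections import deque


class Scaler:
    """Target-tracking scaler: react to spikes immediately, shrink only after a calm window."""

    def __init__(self, min_replicas, max_replicas, target, window=5, tolerance=0.1):
        self.min_replicas = min_replicas
        self.max_replicas = max_replicas
        self.target = target  # e.g. target average CPU % or queue depth per replica
        self.tolerance = tolerance  # ignore ratios within +/-10% of 1.0
        self.recent = deque(maxlen=window)  # recent raw recommendations

    def recommend(self, current, metric):
        ratio = metric / self.target
        if abs(ratio - 1.0) <= self.tolerance:
            raw = current  # close enough to target: hold steady
        else:
            raw = math.ceil(current * ratio)
        raw = max(self.min_replicas, min(self.max_replicas, raw))
        self.recent.append(raw)
        if raw >= current:
            return raw  # scale up (or hold) immediately
        # Scale down only as far as the largest recent recommendation allows.
        return min(current, max(self.recent))
```

With a CPU target of 60% and a three-step window, a spike to 90% on 4 replicas jumps straight to 6, but a drop to 30% only shrinks the fleet back to 3 after three consecutive low readings. That asymmetry is what prevents oscillation.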

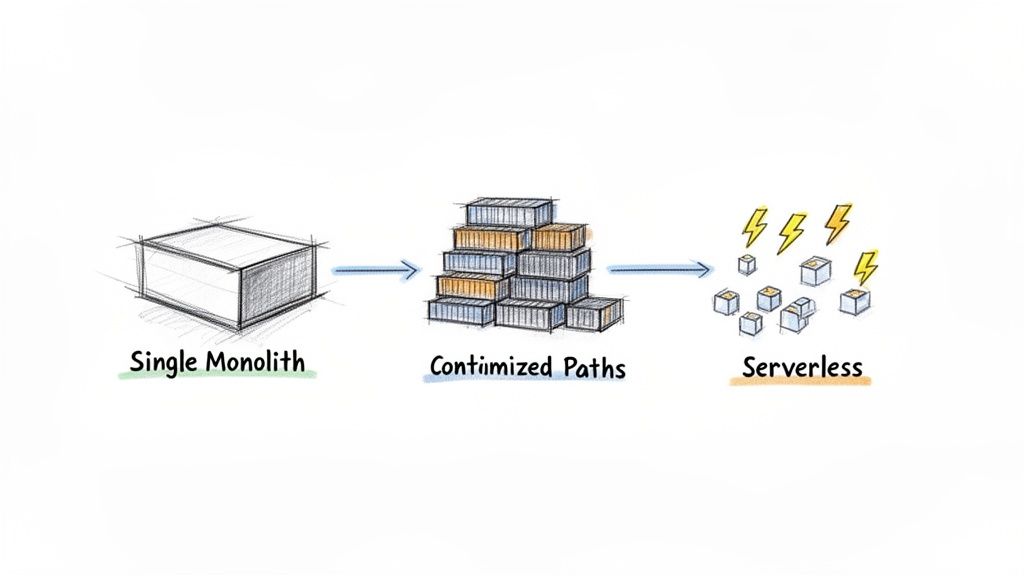

4. Container Optimization and Serverless Alternatives

Transitioning workloads from traditional virtual machines to more modern architectures is a primary cloud cost optimization strategy. Containers (Docker/Kubernetes) let you pack several services densely onto shared capacity, while serverless platforms (Lambda, Cloud Functions) bill only for requests and execution time; both cut what you pay for idle VM resources. This approach can reduce compute spend by 40-60%, especially for event-driven tasks, background jobs, and APIs with variable traffic, making it a critical choice for building a lean, scalable product.

This architectural shift moves your infrastructure from paying for uptime to paying for execution. For startups, this means your cloud bill directly reflects user activity, not just provisioned capacity. It's about building a technical asset that's both efficient and responsive, aligning your operational costs with your growth trajectory. Choices like these also demonstrate advanced, audit-ready engineering practices to investors.

Actionable Implementation

Start by identifying workloads that don't require a 24/7 server. Batch processing, image resizing, and data pipeline tasks are ideal candidates. For containerization, begin with a simple Docker image for one of your services and deploy it using a managed service like AWS Fargate or Google Cloud Run to avoid Kubernetes' initial operational complexity.

For serverless, pick a single, self-contained API endpoint with spiky or intermittent traffic and migrate its logic to an AWS Lambda or Azure Function. Tools like the AWS Serverless Application Model (SAM) or the Serverless Framework can simplify deployment and management. The goal is to prove the model with a small, measurable win before a broader rollout.
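Before migrating an endpoint, a back-of-the-envelope comparison helps confirm that serverless is actually cheaper for its traffic profile. The sketch below follows Lambda's request-plus-duration billing model; the default prices are assumptions based on published x86 on-demand rates in us-east-1 (about $0.20 per million requests and $0.0000166667 per GB-second, ignoring the free tier), and the $30/month always-on VM is a hypothetical comparison point. Check current pricing for your region and architecture.

```python
def lambda_monthly_cost(requests, avg_duration_ms, memory_mb,
                        price_per_million_requests=0.20,
                        price_per_gb_second=0.0000166667):
    """Estimate monthly Lambda cost from request volume, average duration, and memory size."""
    gb_seconds = requests * (avg_duration_ms / 1000) * (memory_mb / 1024)
    request_cost = requests / 1_000_000 * price_per_million_requests
    return request_cost + gb_seconds * price_per_gb_second


def breakeven_requests(vm_monthly_cost, avg_duration_ms, memory_mb, **prices):
    """Monthly request volume at which Lambda costs the same as an always-on VM."""
    per_million = lambda_monthly_cost(1_000_000, avg_duration_ms, memory_mb, **prices)
    return vm_monthly_cost / (per_million / 1_000_000)
```

Under these assumptions, a 200 ms, 512 MB function costs about $1.87 per million requests, so it stays cheaper than a $30/month instance until roughly 16 million requests per month. The estimate excludes API Gateway, data transfer, and provisioned concurrency, which can shift the breakeven point.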

A data processing startup we advised reduced its monthly compute bill from $8,000 to just $1,200. The key was moving its batch jobs from a fleet of scheduled EC2 instances to a solution using AWS Batch with Spot instances, demonstrating the immense savings available.

Strategic Tips for Founders:

Slim Your Containers: Use lightweight base images like Alpine or Google's "distroless" images. This can shrink container sizes by over 70%, leading to faster deployments and reduced storage costs.

Use Spot Instances with Kubernetes: For fault-tolerant containerized workloads, integrate Spot or Preemptible instances into your Kubernetes cluster. This can provide discounts of up to 90% compared to on-demand pricing for your worker nodes.

Monitor Serverless Performance: For serverless functions, keep a close watch on cold starts. Use "provisioned concurrency" for latency-sensitive APIs to ensure a warm environment, but apply it selectively to manage costs.

Adopt Hybrid Architectures: You don't have to choose one or the other. Run your core, latency-sensitive API on a warm Kubernetes cluster while offloading spiky, non-critical background tasks to serverless functions for maximum cost-efficiency.

5. Storage Tiering and Data Lifecycle Management

Storage costs often grow insidiously, accumulating quietly until they become a significant line item on your cloud bill. Implementing intelligent data tiering is a powerful cloud cost optimization strategy that directly combats this uncontrolled growth. The process involves automatically moving infrequently accessed data to progressively cheaper storage classes and setting clear policies for data deletion, which can reduce storage spend by 50-80%.

For a startup, this is a critical discipline. Every byte of data, from user-generated content to system logs, has a lifecycle. Failing to manage it means paying premium prices for data that provides no immediate value, such as old customer backups or analytics snapshots. By establishing automated lifecycle policies, you build a lean data architecture that minimizes waste.

Actionable Implementation

Begin by auditing your current storage usage. Use native tools like AWS S3 Storage Class Analysis or open-source solutions like Cloud Custodian to identify which data is rarely accessed. These tools provide the insights needed to create effective lifecycle policies that move data from a standard, active tier to an infrequent access tier and eventually to a cold archive.

For example, a SaaS platform might discover it's storing 300GB of old customer backups in expensive Standard storage. By moving 90% of that data to a service like Amazon S3 Glacier, it could immediately cut the per-gigabyte storage cost of that data by a large margin. Likewise, an analytics startup can enable S3 Intelligent-Tiering to automate this process based on access patterns, turning a reactive cost problem into a proactive, automated solution.

We often see healthcare startups automate their data lifecycle to remain compliant while optimizing costs. By creating a policy to archive DICOM images to a cold storage tier after seven years, they can reduce their active storage footprint by over 60%, demonstrating operational maturity and fiscal responsibility.

Strategic Tips for Founders:

Implement Tiering Gradually: Don't move everything to an archive at once. Create a phased policy, such as moving data to Infrequent Access after 30 days, Glacier after 90, and Deep Archive after 180.

Manage Logs Intelligently: Delete non-critical logs after their useful retention period. Only use features like S3 Object Lock if strict compliance mandates it, as it prevents deletion and can increase costs.

Use Cost Allocation Tags: Tag storage buckets by data type or application (e.g., data:logs, data:user-backups). This allows you to track storage ROI and attribute costs correctly across your business.
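The phased tiering tip maps directly onto an S3 lifecycle rule. The sketch below builds the dictionary shape that boto3's `put_bucket_lifecycle_configuration` accepts and checks that transition days increase; the `backups/` prefix, the one-year expiration, and the `build_lifecycle_rule` helper are hypothetical choices. Minimum storage durations (30 days for Standard-IA, 90 for Glacier Flexible Retrieval, 180 for Deep Archive) still apply and are billed if objects leave a class early.

```python
def build_lifecycle_rule(prefix, transitions, expire_after_days=None, rule_id="tier-and-expire"):
    """Build one S3 lifecycle rule.

    transitions: list of (days_after_creation, storage_class) pairs, e.g. (30, "STANDARD_IA").
    """
    days = [d for d, _ in transitions]
    if days != sorted(days) or len(set(days)) != len(days):
        raise ValueError("transition days must be strictly increasing")
    if expire_after_days is not None and days and expire_after_days <= days[-1]:
        raise ValueError("expiration must come after the last transition")
    rule = {
        "ID": rule_id,
        "Filter": {"Prefix": prefix},
        "Status": "Enabled",
        "Transitions": [{"Days": d, "StorageClass": c} for d, c in transitions],
    }
    if expire_after_days is not None:
        rule["Expiration"] = {"Days": expire_after_days}
    return rule


# 30 days -> Infrequent Access, 90 -> Glacier Flexible Retrieval, 180 -> Deep Archive.
lifecycle = {
    "Rules": [
        build_lifecycle_rule(
            "backups/",
            [(30, "STANDARD_IA"), (90, "GLACIER"), (180, "DEEP_ARCHIVE")],
            expire_after_days=365,
        )
    ]
}

# To apply (requires AWS credentials and an existing bucket):
# import boto3
# boto3.client("s3").put_bucket_lifecycle_configuration(
#     Bucket="your-bucket-name", LifecycleConfiguration=lifecycle)
```

Keeping the rule in code makes the retention policy reviewable alongside the rest of your infrastructure, which is easier to show during due diligence than a console setting.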

6. Network and Data Transfer Optimization

Data transfer costs are frequently overlooked yet can constitute a significant portion of a cloud bill, often 15-25% for data-intensive startups. This optimization strategy focuses on minimizing the cost of moving data out of the cloud (egress). Implementing a Content Delivery Network (CDN) and co-locating resources are key tactics that can cut network spending by 30-60% while simultaneously boosting application performance.

For a founder building a product with significant media or API traffic, this is not a future optimization; it's a foundational architectural choice. Unmanaged data transfer fees scale directly with user growth, turning your success into a financial liability. Addressing this early builds a more efficient, profitable technical asset.

Actionable Implementation

Start by integrating a CDN like AWS CloudFront or Cloudflare into your architecture from day one. These services cache your content at edge locations globally, serving users from a nearby server instead of your origin. This drastically reduces expensive egress traffic from your primary cloud region.

Also, audit internal traffic patterns. Use VPC Endpoints for internal communication between your services and AWS services like S3 or DynamoDB. This keeps traffic on the private AWS network, bypassing costly NAT Gateways and their associated data processing fees.

A media startup we worked with saw their egress bill drop from $8,000/month to just $1,800/month after correctly implementing CloudFront with Origin Shield. The change not only saved them over $74,000 annually but also improved global content load times, which was critical for user retention.

Strategic Tips for Founders:

Implement a CDN Before Launch: Make services like CloudFront or Cloudflare part of your core MVP architecture. It's far easier to build with content delivery in mind than to retrofit it later.

Enable Compression: Activate Gzip or Brotli compression on your web server or CDN. This simple step can reduce the size of text-based files by over 80%, lowering transfer costs and speeding up your site.

Monitor Your Cache Hit Ratio: Aim for a cache hit ratio of 90% or higher for static assets. Use CDN monitoring tools to track this metric; a low ratio indicates a configuration problem that is costing you money on every user request.

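Use VPC Endpoints: If your application instances in private subnets need to access AWS services like S3, use VPC endpoints. This configuration change removes NAT Gateway data processing charges for that traffic, a common source of hidden costs. Gateway endpoints for S3 and DynamoDB carry no extra charge, while interface endpoints have their own hourly and per-GB fees.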
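The cache-hit-ratio and compression tips can both be sanity-checked with a few lines of standard-library Python. This sketch assumes you already have hit and miss counts from your CDN's reports; it treats every miss as a full origin fetch (ignoring headers, revalidation, and Origin Shield), and the sample JSON payload is hypothetical, so real compression ratios will vary with your content.

```python
import gzip
import json


def cache_hit_ratio(hits, misses):
    """Fraction of requests served from the CDN edge."""
    total = hits + misses
    return hits / total if total else 0.0


def origin_egress_gb(total_gb_served, hit_ratio):
    """Approximate data pulled from the origin: only cache misses go back to it."""
    return total_gb_served * (1 - hit_ratio)


def gzip_savings(payload: bytes) -> float:
    """Fraction of bytes saved by gzip-compressing a payload."""
    return 1 - len(gzip.compress(payload)) / len(payload)


# Hypothetical, highly repetitive API response used to illustrate text compression.
sample = json.dumps(
    [{"id": i, "status": "active", "region": "us-east-1"} for i in range(1000)]
).encode("utf-8")
```

At a 90% hit ratio, 1,000 GB served to users means only about 100 GB leaves the origin; at 60%, origin egress quadruples to 400 GB. That is why a falling hit ratio shows up directly on the bill.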

7. Database and Caching Optimization

For most data-driven startups, the database represents a significant portion of infrastructure spend, often accounting for 20-30% of the total cloud bill. Optimizing your data layer is not just about cutting costs; it's about building a responsive, scalable product. This strategy involves a multi-pronged approach: selecting the right database model, implementing intelligent caching, and continuously refining queries and instance sizes.

A poorly configured database creates a performance bottleneck that becomes increasingly expensive as user traffic grows. By strategically caching frequently accessed data with services like Redis or Memcached, you can drastically reduce the load on your primary database. This often allows you to downsize your database instance, delivering a faster user experience for a fraction of the cost.

Actionable Implementation

Begin by analyzing your application's data access patterns. Identify which data is read frequently but updated infrequently, such as user profiles, product catalogs, or configuration settings. This is the prime candidate for caching. Use a service like Amazon ElastiCache for Redis or Azure Cache for Redis to store this data in-memory, delivering responses in milliseconds.

For example, a SaaS platform can implement a cache-aside (lazy-loading) strategy with ElastiCache in front of its RDS database. This setup can handle a 10x increase in concurrent users on the same database hardware, deferring a costly upgrade. For write-heavy analytics workloads, consider Amazon DynamoDB on-demand capacity, which automatically scales to match traffic and eliminates the cost of provisioned-but-idle resources.
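The caching approach described above is commonly implemented as cache-aside (lazy loading): check the cache, fall back to the database on a miss, and store the result with a TTL. Here a plain dictionary stands in for Redis so the example runs anywhere; with redis-py you would swap in `get` and `set(key, value, ex=ttl_seconds)` and serialize values. The `CacheAside` class, the 300-second TTL, and the loader function are hypothetical.

```python
import time


class CacheAside:
    """Lazy-loading cache with a TTL and hit-rate tracking."""

    def __init__(self, loader, ttl_seconds=300, clock=time.monotonic):
        self._loader = loader  # function that reads from the primary database
        self._ttl = ttl_seconds
        self._clock = clock  # injectable for testing
        self._store = {}  # key -> (expires_at, value); stand-in for Redis
        self.hits = 0
        self.misses = 0

    def get(self, key):
        entry = self._store.get(key)
        now = self._clock()
        if entry is not None and entry[0] > now:
            self.hits += 1
            return entry[1]
        # Miss or expired entry: read from the database and repopulate the cache.
        self.misses += 1
        value = self._loader(key)
        self._store[key] = (now + self._ttl, value)
        return value

    def hit_rate(self):
        total = self.hits + self.misses
        return self.hits / total if total else 0.0
```

The `hit_rate` counter is the number to watch: every hit is a query your database did not have to serve, which is what makes a smaller instance viable.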

We guided an e-commerce MVP that reduced its primary database from a db.r5.large to a db.t3.medium solely through aggressive caching of its product catalog. This single change saved them over $800 per month and improved page load times, directly impacting user conversion.

Strategic Tips for Founders:

Cache Aggressively, Measure Relentlessly: Start by caching user session data, configurations, and public content. Monitor your cache hit rate; a high hit rate (over 90%) is a strong indicator of effective cost reduction.

Optimize Queries First: Before resizing your database, use your cloud provider's tools to monitor for slow queries. Simple query optimization or adding an index can often yield a 10-50% performance gain without any infrastructure changes.

Consider Aurora for Relational Workloads: If you use MySQL or PostgreSQL, evaluate Amazon Aurora. Its storage layer grows automatically, and Aurora Serverless v2 can scale compute with demand, which can deliver better performance-to-cost value than standard RDS for variable workloads; for small, steady workloads, benchmark both before migrating.

Pool Connections in Serverless Architectures: When using AWS Lambda or other serverless functions, implement RDS Proxy. It manages database connection pools, preventing your database from being overwhelmed by a high volume of concurrent, short-lived connections.

8. Monitoring, Tagging, and Cost Allocation

You cannot optimize what you cannot measure. Implementing proper cloud cost visibility is the foundational step for all other cloud cost optimization strategies. Through disciplined resource tagging, cost allocation, and detailed monitoring, startups can gain a clear picture of where every dollar is spent, moving from reactive fire-fighting to data-driven decision-making. Founders without this visibility often waste 20-30% of their cloud spend before even realizing there's a problem.

This strategy is about building financial accountability into your technical infrastructure. For a founder seeking investment, it demonstrates operational control and fiscal maturity. By tracking costs per feature, team, or environment, you create a system where engineers are empowered to build efficiently, turning your cloud bill from an opaque expense into a manageable, strategic asset.

Actionable Implementation

Begin by defining a standardized tagging schema before you even write the first line of code. Enforce it with governance tooling such as AWS Organizations tag policies or Azure Policy, then use AWS Cost Explorer, Azure Cost Management, or Google Cloud Billing reports to filter and group costs by those tags (on AWS, user-defined tags must first be activated as cost allocation tags; Google Cloud calls them labels). These views provide the clarity needed to identify waste and allocate budgets effectively.

For instance, a Series A startup can use this approach to discover thousands per month in unattached storage volumes or unused IP addresses. By tagging resources with "owner," "project," and "environment" keys, they can quickly trace these orphaned assets back to their source and decommission them, unlocking immediate savings.

We recently worked with a client who caught a runaway database query that was consuming $2,000 per day, completely unnoticed. It was only after implementing AWS Cost Anomaly Detection, a core part of a robust monitoring strategy, that the spike was flagged and resolved. This isn't just optimization; it's essential risk management.
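Managed services such as AWS Cost Anomaly Detection use their own machine-learning models, but the underlying idea can be illustrated with a much simpler statistical check: compare each day's spend with the trailing week's mean and flag values more than a few standard deviations above it. This z-score approach is a swapped-in stand-in, not the AWS algorithm; the 7-day window, the threshold of 3, and the sample figures are assumptions.

```python
import statistics


def flag_cost_anomalies(daily_costs, window=7, threshold=3.0):
    """Return (day_index, cost) pairs whose cost spikes above the trailing-window baseline."""
    anomalies = []
    for i in range(window, len(daily_costs)):
        history = daily_costs[i - window:i]
        mean = statistics.mean(history)
        # Floor the spread so a perfectly flat history doesn't cause division by zero.
        spread = max(statistics.pstdev(history), 0.01 * mean, 1e-9)
        if (daily_costs[i] - mean) / spread > threshold:
            anomalies.append((i, daily_costs[i]))
    return anomalies


# Hypothetical daily spend: a steady ~$100/day with a $2,000 runaway on day 7.
daily = [100, 102, 98, 101, 99, 100, 103, 2100, 101]
```

Here only day 7 is flagged. Even a crude check like this, run daily against billing exports, would surface a $2,000-per-day runaway within a day rather than at month end.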

Strategic Tips for Founders:

Standardize Your Schema: Before launch, establish a mandatory tagging policy for key identifiers like project, environment, cost-center, and owner. This creates an audit-ready trail from day one.

Enable Anomaly Detection: Activate services like AWS Cost Anomaly Detection or its equivalent in Azure or GCP. These tools automatically alert you to unusual spending patterns, preventing small issues from becoming major financial drains.

Share the Responsibility: Schedule quarterly cost reviews with your engineering teams. When developers see the direct financial impact of their code, they become partners in optimization, fostering a culture of cost-conscious engineering.
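A mandatory tagging schema is only useful if something checks it. The sketch below audits a resource inventory against the four required keys from the schema tip; the inventory format (a list of dicts with `id` and `tags`), the allowed `environment` values, and the `audit_tags` helper are hypothetical, and in practice the inventory would come from your provider's resource or tagging API.

```python
REQUIRED_TAGS = ("project", "environment", "cost-center", "owner")
ALLOWED_ENVIRONMENTS = {"dev", "staging", "prod"}  # hypothetical convention


def audit_tags(resources):
    """Map resource ID -> list of problems (missing keys or invalid environment values)."""
    problems = {}
    for resource in resources:
        tags = resource.get("tags", {})
        issues = [f"missing {key}" for key in REQUIRED_TAGS if not tags.get(key)]
        env = tags.get("environment")
        if env and env not in ALLOWED_ENVIRONMENTS:
            issues.append(f"invalid environment {env!r}")
        if issues:
            problems[resource["id"]] = issues
    return problems
```

Running a check like this in CI or on a schedule turns untagged volumes and IP addresses into a short, actionable list instead of a line item discovered months later.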

9. Spot Instances and Preemptible Capacity for Non-Critical Workloads

For fault-tolerant workloads, using Spot Instances (AWS) or Spot/Preemptible VMs (Google Cloud) is the most aggressive and impactful of all cloud cost optimization strategies. These instances represent spare compute capacity, offered at discounts of 70-90% compared to on-demand pricing. The trade-off is that the cloud provider can reclaim this capacity on short notice (two minutes on AWS, 30 seconds on Google Cloud), making them unsuitable for mission-critical, stateful services.

For a startup, this is a direct lever to pull to slash operational expenses. By identifying and migrating non-critical workloads like batch jobs, CI/CD pipelines, or data analytics processing, you can redirect significant capital back into product development or marketing. Savvy engineering teams often run 40-60% of their entire compute infrastructure on Spot, demonstrating high operational maturity.

Actionable Implementation

The key to successfully using Spot is designing for interruption. Instead of running a single Spot instance, use provider tools like AWS Spot Fleet or Google Cloud's managed instance groups. These services automatically request capacity across different instance types and availability zones to find the lowest price and highest availability, maintaining your target capacity even when interruptions occur.

For example, a data science startup could run 80% of its model training jobs on a diversified Spot Fleet. This simple architectural choice could reduce a monthly compute bill from $12,000 to just $2,400, freeing up nearly $10k in monthly runway.

We often guide founders to run their entire testing and CI/CD infrastructure on Spot. It's a perfect fit, as these jobs are stateless and transient. This typically cuts build and test costs by 85%, turning a necessary operational expense into a negligible one.

Strategic Tips for Founders:

Start with Low-Hanging Fruit: Begin by migrating your most fault-tolerant workloads first. CI/CD test runners, image and video transcoding jobs, and periodic data processing are ideal candidates.

Implement Graceful Shutdown: Engineer your applications to react to the interruption notice (on AWS, exposed through instance metadata and EventBridge) and to handle the SIGTERM signal sent at shutdown. This means saving state, draining connections, and exiting cleanly within the notice window to avoid data corruption.

Diversify for Reliability: Don't rely on a single instance type in one availability zone. Create a Spot Fleet request that includes 5-10 different instance types to dramatically reduce the chance of losing all your capacity at once.

Monitor Interruption Rates: Use CloudWatch or your provider's equivalent to track your Spot interruption frequency. If your rate climbs above 5%, it's a signal to diversify your instance pool further or slightly increase your on-demand footprint for stability.
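The graceful-shutdown tip can be sketched as a worker loop that stops pulling new work once SIGTERM arrives, checkpoints what it finished, and hands back the rest for another node. On AWS, the two-minute notice is also exposed through the instance metadata service (the `spot/instance-action` path) and as an EventBridge event, which tools such as the AWS Node Termination Handler use to drain Kubernetes nodes; this sketch only covers the process-level signal. `GracefulWorker` and the in-memory checkpoint list are hypothetical stand-ins for your job runner and durable storage.

```python
import signal
import threading


class GracefulWorker:
    """Process jobs until SIGTERM, then stop cleanly and report unfinished work."""

    def __init__(self):
        self._stop = threading.Event()
        self.completed = []  # stand-in for durable checkpoints (e.g. a database or S3)

    def handle_sigterm(self, signum, frame):
        # Only set a flag here; the main loop does the actual cleanup.
        self._stop.set()

    def install(self):
        # signal.signal must be called from the main thread.
        signal.signal(signal.SIGTERM, self.handle_sigterm)

    def run(self, jobs, process):
        """Run `process` on each job; return the jobs left unprocessed after a stop request."""
        pending = list(jobs)
        while pending and not self._stop.is_set():
            job = pending.pop(0)
            process(job)
            self.completed.append(job)  # checkpoint after each finished job
        return pending
```

In a real entry point you would call `install()` once at startup, keep each job short relative to the notice window, and requeue whatever `run` returns so another instance can pick it up.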

10. Cloud Provider Cost Commitment and Discount Negotiation

Once you move beyond standard Reserved Instances and Savings Plans, the next level of cloud cost optimization involves direct negotiation with your cloud provider. For high-growth, venture-backed startups, this strategy unlocks significant savings that aren't available on a public pricing page. It transforms the vendor relationship into a partnership, where providers like AWS, Google Cloud, and Azure offer custom discounts in exchange for committed, long-term spend.

This approach is about recognizing your value as a future enterprise customer. As your monthly spend grows, you gain negotiating power. Securing a deal like an AWS Enterprise Discount Program (EDP) or a custom Google Cloud agreement can reduce costs by 10-30% beyond standard commitments, often accompanied by valuable startup credits and co-marketing support, making your technical asset more capital-efficient.

Actionable Implementation

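Start the conversation with your provider once your cloud spend consistently hits the $5k-$10k monthly range; at that level, the realistic wins are usually startup credits and program benefits, since formal agreements such as an EDP typically require a much larger annual commitment. Prepare a clear document outlining your growth trajectory, product roadmap, and projected future cloud consumption. This isn't just a cost-cutting exercise; it's a strategic move to formalize your partnership with the cloud vendor.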

Engage with your provider’s dedicated startup or enterprise account manager. If you’re part of an accelerator or backed by a VC firm, ask about their cloud partner contacts. These firms often have established relationships and pre-negotiated perks that their portfolio companies can access. Professional guidance from a cloud migration consulting service can also help prepare the technical and financial justification needed for these high-stakes negotiations.

A Series B startup we advised successfully negotiated a 2-year AWS EDP by demonstrating a clear path to tripling their spend post-fundraise. They secured a 22% discount on all on-demand usage and $100k in credits, directly improving their runway and gross margins.

Strategic Tips for Founders:

Timing is Everything: Initiate these discussions when you are preparing for a major funding round. A new injection of capital signals a commitment to growth and makes your projected spend more credible.

Maintain Leverage: Even if you favor one provider, architecting for multi-cloud readiness gives you a powerful negotiating tool. The ability to shift workloads prevents vendor lock-in and keeps pricing competitive.

Document Everything: Ensure all discounts, terms, support levels, and potential exit clauses are clearly stated in a written agreement. Verbal commitments are not enough; a formal contract protects your interests.

10-Point Cloud Cost Optimization Comparison

| Strategy | Implementation complexity | Resource requirements | Expected outcomes | Ideal use cases | Key advantages |
|---|---|---|---|---|---|
| Right-Sizing Compute Instances | Low–Medium: monitoring and staged changes (1–3 months baseline) | Monitoring tools, historical metrics, minimal engineering time | ~20–30% cost reduction; better resource visibility | MVPs with over-provisioned VMs across clouds | Quick ROI, no architectural changes, provider-agnostic |
| Reserved Instances & Savings Plans | Medium: forecasting and purchase decisions | Billing analysis, budgeting/commitment, cost-recommender tools | 30–72% discounts; predictable monthly costs | Stable, predictable baseline workloads in production | Largest discounts for steady usage; budgeting certainty |
| Auto-Scaling & Demand-Based Capacity | Medium–High: policy tuning and testing | Autoscaling configs, monitoring, health checks, SRE effort | 30–50% cost reduction; handles spikes with improved availability | Variable-traffic apps, time-zone distributed users | Eliminates over-provisioning; automatic spike handling |
| Container Optimization & Serverless Alternatives | High: refactoring, orchestration, platform changes | Container tooling, Kubernetes/serverless platforms, SRE skills | 40–60% compute reduction; reduced idle cost; faster deployments | Event-driven APIs, background jobs, microservices | Pay-for-use billing, better utilization, faster scale-up |
| Storage Tiering & Data Lifecycle Management | Low–Medium: policy setup and audits | Lifecycle policies, storage analytics, occasional retrieval planning | 50–80% storage cost reduction; improved data hygiene/compliance | Backups, archival data, rapidly growing storage pools | Hands-off savings, transparent to apps, compliance-friendly |
| Network & Data Transfer Optimization | Medium: CDN and network architecture work | CDN services, VPC design, compression/caching layers | 30–60% network cost reduction; lower latency | Media-heavy apps, distributed users, API-heavy platforms | Reduces egress, improves performance, adds security features |
| Database & Caching Optimization | Medium–High: query tuning to architectural changes | Caching (Redis), DB tuning tools, schema and pooling work | DB load cut 70%+; costs reduced 40–60%; faster responses | Data-driven, read-heavy, high-concurrency applications | Big throughput gains, fewer expensive DB scale-ups |
| Monitoring, Tagging & Cost Allocation | Medium: governance and tooling adoption | Tagging standards, dashboards, FinOps tools, cultural changes | Reveals 20–30% hidden waste; enables data-driven optimizations | All startups before major optimization work | Foundation for all cost work; accountability and forecasting |
| Spot Instances & Preemptible Capacity | Medium–High: interruption handling and diversification | Spot fleets, orchestration, fault-tolerant app design | 70–90% discounts for tolerant workloads; large capacity | Batch jobs, CI/CD, analytics, fault-tolerant services | Extreme discounts; low-cost large-scale compute capacity |
| Cloud Provider Cost Commitment & Negotiation | High: negotiation, legal and spend commitments | Sales engagement, spend forecasts, legal/financial reviews | Additional 10–30% off + startup credits and dedicated support | High-spend or venture-backed startups approaching scale | Extra discounts, dedicated TAMs, co-marketing and credits |

From Cost Center to Strategic Asset: Your Next Move

You've explored ten distinct cloud cost optimization strategies, from right-sizing compute instances to negotiating long-term provider discounts. The temptation might be to view this as a checklist: complete each item and consider the job done. But this perspective misses the fundamental point. Effective cloud cost management is not a one-time audit; it's a continuous discipline woven into the fabric of your engineering culture.

For a startup founder, particularly one building an investor-ready MVP, every technical decision is a business decision. The strategies outlined in this article, such as implementing auto-scaling, containerizing workloads, and establishing robust tagging policies, are more than operational efficiencies. They signal to investors that you are building a mature, scalable, high-valuation business. An infrastructure that hemorrhages cash is a red flag during due diligence. In contrast, one built on the principles of financial prudence and technical excellence becomes a core component of your company's "technical moat."

Shifting from Reactive Fixes to Proactive Architecture

The most common mistake is treating cost optimization as a reactive, after-the-fact cleanup project. That approach is almost always more expensive and more disruptive, because it means reworking systems that are already serving users. The real value is realized when these principles are integrated from day one.

Consider the journey of your MVP:

Initial Build: Choosing serverless functions over provisioned servers for event-driven tasks isn't just about code; it's a decision that bakes in pay-per-use efficiency from the start.

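Pre-Launch: Once a core workload shows a stable, predictable baseline (a primary database that runs around the clock, for example), covering that baseline with Reserved Instances or Savings Plans demonstrates foresight, turning a variable expense into a predictable, discounted operating cost. Commit only to usage you have actually measured, because a commitment is billed whether or not you use it.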

Post-Launch & Scaling: Having auto-scaling groups and a data lifecycle policy already in place means your infrastructure can handle a sudden influx of users without manual intervention or runaway bills.
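The pricing logic behind the first two stages comes down to simple break-even arithmetic. The Python sketch below is illustrative only: every rate in it is a hypothetical placeholder rather than a quote from any provider, the 730-hour month is a common billing approximation, and real bills include items (free tiers, data transfer, upfront payments) that it ignores. It compares a pay-per-use function against two always-on instances at several traffic levels, then computes the share of hours a workload must run before a commitment discount pays off.

```python
"""Break-even sketch for two MVP pricing decisions.

All rates used below are HYPOTHETICAL placeholders, not real provider prices.
"""

HOURS_PER_MONTH = 730  # common billing approximation: 8,760 hours / 12


def serverless_monthly_cost(requests: int, price_per_million: float,
                            avg_duration_s: float, memory_gb: float,
                            price_per_gb_s: float) -> float:
    """Pay-per-use: cost scales with request count and compute time."""
    request_cost = requests / 1_000_000 * price_per_million
    compute_cost = requests * avg_duration_s * memory_gb * price_per_gb_s
    return request_cost + compute_cost


def provisioned_monthly_cost(instances: int, hourly_rate: float) -> float:
    """Always-on servers: cost is fixed no matter how much traffic arrives."""
    return instances * hourly_rate * HOURS_PER_MONTH


def commitment_breakeven_utilization(on_demand_rate: float,
                                     committed_rate: float) -> float:
    """Minimum fraction of hours a workload must run for a commitment to win.

    A commitment bills committed_rate for every hour of the term, used or not;
    on-demand bills on_demand_rate only for the hours actually used. Pass the
    effective committed rate, with any upfront payment amortized over the term.
    """
    return committed_rate / on_demand_rate


if __name__ == "__main__":
    # Hypothetical workload: 200 ms average duration at 0.5 GB of memory.
    for monthly_requests in (100_000, 10_000_000, 100_000_000):
        fn = serverless_monthly_cost(monthly_requests, price_per_million=0.20,
                                     avg_duration_s=0.2, memory_gb=0.5,
                                     price_per_gb_s=0.0000167)
        vm = provisioned_monthly_cost(instances=2, hourly_rate=0.04)
        print(f"{monthly_requests:>11,} req/month: "
              f"serverless ${fn:,.2f} vs provisioned ${vm:,.2f}")

    breakeven = commitment_breakeven_utilization(on_demand_rate=0.04,
                                                 committed_rate=0.025)
    print(f"Commit only if the workload runs more than {breakeven:.1%} of hours")
```

With these placeholder rates, the serverless option costs well under a dollar at 100,000 requests a month and about $18.70 at 10 million, against $58.40 for the two instances, but it overtakes them somewhere between 10 million and 100 million requests. The commitment break-even of 62.5% means that below that level of utilization, staying on demand is cheaper.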

This proactive stance transforms your cloud spend from a liability into a strategic asset. You are no longer just "renting servers"; you are architecting a system that contributes directly to your gross margins and enterprise valuation. Mastering these cloud cost optimization strategies is essential for building a resilient, audit-ready product. For a deeper treatment, refer to a comprehensive playbook for optimizing cloud computing.

Your Actionable Path Forward

Moving from theory to practice is the critical next step. Don't try to boil the ocean; prioritize by impact and effort. Begin with basic tagging and cost visibility so you know where the money actually goes, then take quick wins such as right-sizing over-provisioned instances and moving non-critical, interruption-tolerant jobs (CI runs, batch processing) to spot capacity, which builds momentum and frees up capital. From there, graduate to more structural changes, such as adopting a FinOps culture and refining your monitoring and governance frameworks.
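The impact-versus-effort prioritization can be made explicit with a simple score: estimated monthly savings per week of engineering effort, plus a payback period that weighs those savings against what the engineering time costs. The Python sketch below is a hypothetical illustration; the `Candidate` type, the effort unit, the weekly engineering cost, and all of the numbers are assumptions to be replaced with estimates from your own billing data.

```python
from dataclasses import dataclass


@dataclass
class Candidate:
    """A hypothetical cost-optimization backlog item."""
    name: str
    est_monthly_savings: float  # USD per month, your own estimate
    effort_weeks: float         # engineering weeks to implement; must be > 0


def prioritize(candidates: list[Candidate]) -> list[Candidate]:
    """Order candidates by monthly savings per week of effort, highest first."""
    return sorted(candidates,
                  key=lambda c: c.est_monthly_savings / c.effort_weeks,
                  reverse=True)


def payback_months(c: Candidate, weekly_eng_cost: float) -> float:
    """Months of savings needed to recoup the engineering time invested."""
    return c.effort_weeks * weekly_eng_cost / c.est_monthly_savings


if __name__ == "__main__":
    # Hypothetical backlog; replace with estimates from your own billing data.
    backlog = [
        Candidate("Move CI runners to spot capacity", 800, 2),
        Candidate("Migrate background jobs to serverless", 1500, 6),
        Candidate("Right-size over-provisioned instances", 1200, 1),
    ]
    for c in prioritize(backlog):
        months = payback_months(c, weekly_eng_cost=3000)
        print(f"{c.name}: payback in {months:.1f} months")
```

With these made-up inputs, right-sizing ranks first ($1,200 saved per week of effort, paying back in 2.5 months), ahead of the spot migration (7.5 months) and the serverless refactor (12 months). A ratio like this ignores risk and strategic value, so treat the ranking as a starting point for discussion rather than a decision rule.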

The goal isn't just to spend less; it's to spend smarter. Every dollar saved on inefficient infrastructure is a dollar you can reinvest into product development, marketing, or hiring your first key engineers. This is how you build a lean, powerful organization that wins.

Ultimately, the mastery of these strategies marks the difference between building a fragile prototype and engineering an investable asset. By embedding financial intelligence into your technical roadmap, you ensure that as your company grows, its efficiency and profitability scale right alongside it. You're not just managing costs; you're building a foundation for long-term, sustainable success.

Are you ready to build an investor-ready product with an infrastructure designed for value, not waste? At Buttercloud, we act as your boutique engineering partner, providing the fractional CTO leadership to ensure your technical architecture is a core asset. Let's engineer your vision into a scalable, audit-ready product together: Buttercloud.