Chatbots Development Services: Build Investor-Ready AI

You’re probably in one of two situations right now.

Your team is drowning in repetitive questions, or your funnel is leaking leads because prospects want answers before they’ll book a demo, start a trial, or buy. Someone has already suggested “adding a chatbot” as the fix. That suggestion sounds simple. It isn’t.

A chatbot is rarely just a chatbot. For a startup, it’s an architectural decision. You can commission a thin interface that answers canned questions and breaks the moment your product, pricing, or workflow changes. Or you can build a system that becomes part of your company’s technical moat, one that captures proprietary interaction data, integrates with core systems, and survives investor technical diligence.

Most founders choose badly because most vendors frame chatbots development services as a support feature. That’s the wrong frame. The key decision is whether your chatbot will become disposable software or a business asset.

Beyond Automation: An Introduction for Founders

The market has already moved. Chatbot adoption surged 4.7x across businesses from 2020 to 2025, and 95% of customer interactions are projected to be AI-powered by 2025 according to G2’s chatbot statistics roundup. The same source notes that chatbots can boost conversions 3x over traditional forms. Founders shouldn’t read that as “everyone needs a bot.” They should read it as “the quality of implementation now matters.”

A bad bot creates friction faster than no bot. It mishandles intent, hides your support team behind clumsy flows, and trains customers not to trust automated interactions from your brand. A good bot does the opposite. It shortens the path from question to action and makes your company look operationally mature.

The real founder question

The useful question isn’t “Should we add AI to customer support?”

It’s this: What kind of software asset are we commissioning, and will it still make sense after the next round of growth, the next product launch, and the next investor diligence process?

That changes how you evaluate chatbots development services. You stop asking only about launch speed and monthly cost. You start asking about:

System ownership: Who controls the codebase, prompts, integrations, and data layer?

Scalability: Can the architecture support more channels, products, and use cases without a rewrite?

Investor readiness: Will a technical reviewer see structured engineering decisions or a pile of brittle automations?

Defensibility: Does the bot get smarter from your proprietary data and workflows, or can any competitor clone it with the same public model?

A founder who treats a chatbot as a throwaway feature usually pays for it twice. Once at launch, and again when the company needs to rebuild it properly.

The upside is substantial if you make the right call. The right chatbot doesn’t just reduce repetitive work. It can qualify leads, accelerate buying decisions, surface product insights, and create a valuable operational dataset around customer language, objections, and intent. That is much closer to a technical asset than a support widget.

The Three Tiers of Chatbot Intelligence

A founder approves a chatbot after a polished demo. Three months later, the bot mishandles pricing questions, fails on account-specific requests, and cannot explain how it reached an answer during diligence. That is not a support issue. It is an engineering mistake.

Use a three-tier model to avoid that mistake. Each tier implies a different level of system design, operating risk, and asset value.

Rule-based is the script follower

Rule-based chatbots work like decision trees with a chat interface. They follow predefined flows, return fixed answers, and push users down approved paths.

This tier fits narrow jobs with low ambiguity. Appointment routing, FAQ triage, form intake, and simple lead capture are good examples. If the process rarely changes and failure has limited business impact, a rule-based bot can be the right call.

Its strength is control. Its weakness is fragility.

Users do not follow scripts. They change wording, ask two things at once, skip steps, and bring context from earlier conversations. A rule-based bot starts breaking the moment the conversation stops matching the flow you designed.

My recommendation is simple. Use this tier only when the bot supports a tightly bounded workflow and you are comfortable treating it as utility software, not a strategic asset.
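To make the fragility concrete, here is a minimal sketch of a rule-based bot as a keyword-driven decision tree. The state names, prompts, and keywords are hypothetical and not taken from any particular product. Note how the bot falls back as soon as the user goes off-script or asks for two things at once.

```python
from __future__ import annotations

# Hypothetical flow: state names, prompts, and keywords are illustrative only.
FLOW = {
    "start": {
        "prompt": "Do you want to book a demo or ask about pricing?",
        "options": {"demo": "book_demo", "pricing": "pricing_info"},
    },
    "book_demo": {"prompt": "Great. What email should we send the invite to?", "options": {}},
    "pricing_info": {"prompt": "Plans are listed on our pricing page.", "options": {}},
}

FALLBACK = "Sorry, I didn't understand that. Connecting you with a person."


def next_state(state: str, user_text: str) -> str | None:
    """Return the next state if exactly one scripted keyword matches, else None."""
    text = user_text.lower()
    matches = [target for keyword, target in FLOW[state]["options"].items() if keyword in text]
    return matches[0] if len(matches) == 1 else None


def reply(state: str, user_text: str) -> tuple[str, str]:
    """Return (new_state, bot_message); stay in place and fall back when off-script."""
    target = next_state(state, user_text)
    if target is None:
        return state, FALLBACK
    return target, FLOW[target]["prompt"]


if __name__ == "__main__":
    print(reply("start", "I'd like a demo"))            # follows the script
    print(reply("start", "Can I change my shipment?"))  # off-script: fallback
    print(reply("start", "demo and pricing please"))    # two requests: fallback
```

Every new phrasing or combined request needs another hand-written branch, which is why this tier suits only tightly bounded workflows.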

NLP and ML is the smart assistant

The second tier handles language with more competence. Instead of mapping every path manually, it classifies intent, extracts entities, tracks context, and routes requests with better judgment.

That matters when users ask messy, real questions. A customer might say, “I need to change my shipment and send the invoice to finance.” A stronger NLP layer can recognize multiple intents, retain context, and trigger the right downstream actions instead of forcing the user into a menu.

This tier usually gives startups the first meaningful product lift. The bot stops acting like a scripted receptionist and starts acting like an operator that can interpret requests reliably. It still needs training data, review loops, and clear business logic. But if your company has recurring support patterns, structured service flows, or qualification logic, this can become a useful operating system for customer interactions.
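As a rough illustration of what this tier produces, the sketch below parses the shipment example into multiple intents plus an entity. The intent labels and trigger phrases are hypothetical, and the phrase matching is only a stand-in: a real NLP/ML tier would use a trained classifier that returns confidence scores. The point is the output shape that downstream business logic consumes.

```python
from dataclasses import dataclass

# Hypothetical intent labels and trigger phrases. A production system would
# replace detect_intents with a trained classifier returning confidence scores.
INTENT_TRIGGERS = {
    "change_shipment": ("change my shipment", "reschedule delivery"),
    "send_invoice": ("send the invoice", "invoice to"),
}

# Hypothetical list of departments the bot is allowed to route documents to.
KNOWN_DEPARTMENTS = ("finance", "legal", "procurement")


@dataclass
class ParsedRequest:
    intents: list
    entities: dict


def detect_intents(text: str) -> list:
    """Return every intent whose trigger phrase appears in the message."""
    lowered = text.lower()
    return [
        intent
        for intent, phrases in INTENT_TRIGGERS.items()
        if any(phrase in lowered for phrase in phrases)
    ]


def extract_entities(text: str) -> dict:
    """Toy entity extraction: pick out the first known department name."""
    lowered = text.lower()
    for department in KNOWN_DEPARTMENTS:
        if department in lowered:
            return {"recipient_department": department}
    return {}


def parse(text: str) -> ParsedRequest:
    return ParsedRequest(detect_intents(text), extract_entities(text))


if __name__ == "__main__":
    print(parse("I need to change my shipment and send the invoice to finance."))
```

Because the parser returns a list of intents rather than a single branch, the application can trigger both downstream actions from one message instead of forcing the user back into a menu.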

It can also be a sensible midpoint for teams deciding between a fast build and a more ambitious architecture. If you are weighing delivery speed, ownership, and extensibility, this breakdown of application development models for startup product decisions gives a practical frame for the tradeoff.

LLM and RAG is the domain expert

This is the tier that can become part of your company’s technical moat. It is also the tier where founders waste money if they buy a demo instead of a system.

An LLM can generate natural responses and handle ambiguity well. That alone does not make it useful for your business. A generic model without retrieval, permissions, and system integrations is still guessing. It may sound confident while being wrong, inconsistent, or unsafe.

A serious implementation grounds the model in your business context. It retrieves approved information from product docs, internal policies, CRM records, knowledge bases, and application data before generating an answer. If you want a plain-language overview of how broad-answering systems differ from domain-grounded systems, see this open domain chatbot guide.

For founders, the key question is not whether an LLM sounds impressive. The question is whether the bot is being engineered into a durable asset that survives product changes, audit requests, and investor scrutiny. Many guides explain enterprise use cases but overlook the core founder problem: building a production-grade, investor-ready chatbot from the start so you do not pay for a rebuild later, a gap highlighted in this industry analysis on the startup angle missing from chatbot guidance.

If the bot cannot access your approved business context, it is not a domain expert. It is a fluent guesser.
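A minimal sketch of that grounding step follows, under simplifying assumptions: the documents and ids are made up, and token overlap stands in for the embedding similarity a real retrieval pipeline would use. The model call itself is left out. What matters is that the prompt is built only from approved content, and that the bot declines to answer when nothing relevant is retrieved.

```python
import re

# Hypothetical approved knowledge snippets. In practice these would come from a
# document store or vector index maintained by the knowledge layer.
APPROVED_DOCS = [
    {"id": "pricing-001", "text": "The Team plan includes five seats and priority support."},
    {"id": "refund-002", "text": "Refunds are available within 30 days of purchase."},
]


def tokens(text):
    """Lowercase alphanumeric tokens as a set."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(question, docs, min_overlap=2, top_k=2):
    """Rank docs by shared-token count (a stand-in for embedding similarity)."""
    q = tokens(question)
    scored = [(len(q & tokens(d["text"])), d) for d in docs]
    scored = [(score, d) for score, d in scored if score >= min_overlap]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [d for _, d in scored[:top_k]]


def build_prompt(question, docs):
    """Return a grounded prompt, or None when nothing approved was retrieved."""
    hits = retrieve(question, docs)
    if not hits:
        return None  # caller should escalate instead of letting the model guess
    context = "\n".join(f"[{d['id']}] {d['text']}" for d in hits)
    return (
        "Answer using only the context below and cite the source id.\n"
        f"Context:\n{context}\n\nQuestion: {question}"
    )


if __name__ == "__main__":
    print(build_prompt("Are refunds available after purchase?", APPROVED_DOCS))
    print(build_prompt("What is the weather today?", APPROVED_DOCS))  # None
```

Returning `None` rather than an ungrounded prompt is the design choice that keeps the bot from becoming a fluent guesser: no approved context means escalation, not generation.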

Chatbot tiers compared

| Attribute | Rule-Based (The Script Follower) | NLP/ML-Powered (The Smart Assistant) | LLM-Driven / RAG (The Domain Expert) |
|---|---|---|---|
| Core behavior | Follows predefined flows | Classifies intent and manages context | Generates answers grounded in retrieved business data |
| Best for | Simple FAQs, routing, lead capture | Support triage, multi-intent queries, structured service flows | Knowledge-heavy support, sales enablement, complex internal workflows |
| Main strength | Predictable and controlled | Better language understanding | High flexibility plus business-specific answers |
| Main weakness | Breaks outside the script | Needs training data and ongoing tuning | Requires serious architecture, retrieval design, and governance |
| Founder implication | Cheap entry point, limited moat | Stronger product value, better user experience | Highest strategic upside if built as infrastructure, not a demo |

Here is the recommendation I give founders. Match the tier to the consequence of failure. If the bot touches revenue, onboarding, account actions, compliance-sensitive information, or proprietary knowledge, commission it like product infrastructure. That is how a chatbot starts increasing company value instead of creating future rework.

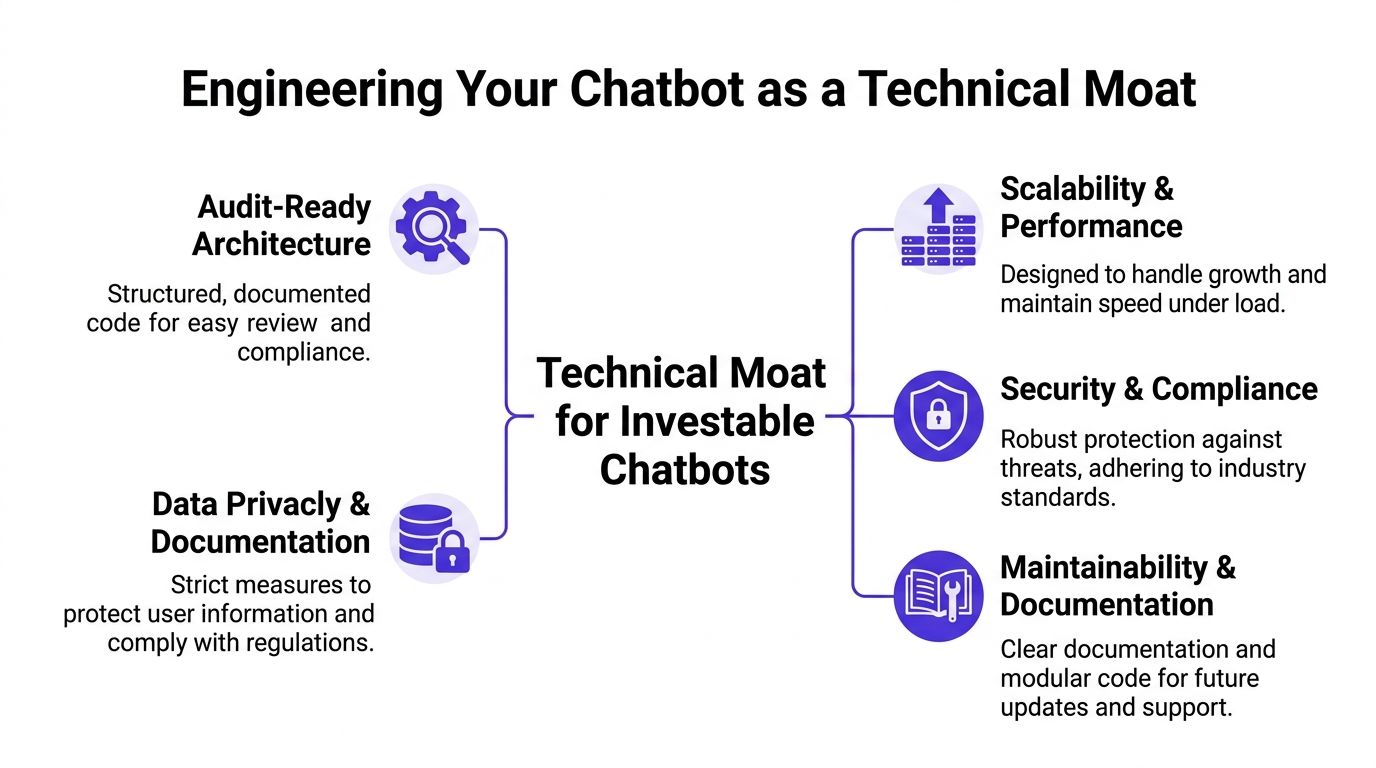

Engineering Your Chatbot as a Technical Moat

Most chatbot projects fail at the architecture layer, not the interface layer. The conversation UI looks fine. The demo works. Then the system meets real users, real edge cases, and real operational demands.

That’s why chatbots development services should be evaluated like product engineering, not campaign work.

Start with architecture, not prompts

Prompts matter. Architecture matters more.

An investable chatbot needs a backend that can authenticate users, call internal services, retrieve domain knowledge, log conversations, manage fallbacks, and support controlled iteration. That usually means combining application services, a model layer, a retrieval pipeline, analytics, and secure integrations into one system that behaves predictably under load.

I’d expect a serious team to define at least these layers early:

Application layer: Handles session logic, permissions, routing, and business rules. Common stacks include Node.js or Python services.

Knowledge layer: Stores approved documents, policies, product content, and structured records for retrieval.

Inference layer: Manages model selection, prompting, guardrails, and response generation.

Integration layer: Connects the bot to Salesforce, HubSpot, Stripe, Zendesk, internal APIs, or your own SaaS backend.

Observability layer: Tracks user intents, failures, escalations, latency, and model output quality.
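One way to picture how these layers fit together is the sketch below. It is an illustration under assumptions, not a prescribed design: the class name, field names, and messages are hypothetical, and the integration layer is omitted for brevity. Each layer is passed in as a callable so it can be developed, tested, and replaced independently.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class ChatPipeline:
    """Hypothetical wiring of the layers; each callable lives in its own module."""

    is_authorized: Callable[[str], bool]             # application layer
    retrieve: Callable[[str], List[str]]             # knowledge layer
    generate: Callable[[str, List[str]], str]        # inference layer
    events: List[dict] = field(default_factory=list)  # observability layer

    def handle(self, user_id: str, message: str) -> str:
        # Permissions are checked before any knowledge is touched.
        if not self.is_authorized(user_id):
            self.events.append({"user": user_id, "outcome": "denied"})
            return "Please sign in to continue."

        # No approved context means a human handoff, not a guess.
        context = self.retrieve(message)
        if not context:
            self.events.append({"user": user_id, "outcome": "escalated"})
            return "I'm handing this to a teammate who can help."

        answer = self.generate(message, context)
        self.events.append(
            {"user": user_id, "outcome": "answered", "sources": len(context)}
        )
        return answer


if __name__ == "__main__":
    # Stub layers for demonstration only.
    pipeline = ChatPipeline(
        is_authorized=lambda user_id: user_id == "u1",
        retrieve=lambda msg: ["refund policy"] if "refund" in msg else [],
        generate=lambda msg, ctx: f"Based on {len(ctx)} source(s).",
    )
    print(pipeline.handle("u1", "How do refunds work?"))
    print(pipeline.events)
```

Keeping the boundaries this explicit is what lets a reviewer trace a request from authentication through retrieval to the logged outcome.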

Founders who want a useful primer on broader conversational behavior beyond narrow scripted bots should review this open domain chatbot guide. It’s a helpful context piece because it makes clear how fast complexity rises once you move from basic flows to open-ended interaction.

RAG is where the moat starts

The strongest technical moat usually appears when the chatbot can answer with your company’s proprietary context.

According to Quickchat’s guide to chatbot development services, RAG pipelines can reduce hallucination rates by 40% to 60% compared to vanilla LLMs, and RAG-enhanced bots achieve 85% to 95% factual precision on internal knowledge bases. That’s the difference between a flashy interface and a system a business can trust.

The point isn’t the acronym. The point is ownership.

If your bot retrieves from your support corpus, internal product documentation, CRM records, billing logic, workflow states, and customer history, then every improvement compounds around your business. Competitors can license the same frontier model. They can’t license your cleaned knowledge base, your integration design, your escalation logic, or your proprietary conversational data.

Production quality for an MVP still matters

Founders often hear “it’s just an MVP” as an excuse for weak engineering. Ignore that advice.

An MVP should narrow scope, not lower standards. You can reduce channels, trim use cases, or limit integrations. But the codebase still needs structure, documentation, deployment discipline, and security boundaries. Otherwise you create an expensive rewrite trigger.

A practical way to apply this:

Constrain the first use case. Don’t launch across support, sales, onboarding, and internal ops at once.

Build the backend as if it will expand. Modular services, clear APIs, and reliable logging are essential.

Own the data contracts. If the bot talks to customer systems, define what gets read, written, and audited.

Design for handoff. Human escalation should be clean, with context passed forward.

Treat evaluation as engineering. Test retrieval quality, unsafe outputs, failure paths, and integration resilience.

Practical rule: A chatbot becomes a technical moat when its hardest parts sit below the UI. Data pipelines, retrieval quality, integration depth, and governance create the value.
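Treating evaluation as engineering can start very small. The sketch below measures one thing, retrieval hit rate at k: the share of test questions for which the expected document appears in the top results. The test cases, document ids, and the fake retriever are hypothetical; in a real project the `retrieve` argument would be the production retrieval function.

```python
def hit_rate_at_k(cases, retrieve, k=3):
    """Share of cases where the expected document id appears in the top-k results.

    `cases` is a list of {"question": str, "expected_doc_id": str}.
    `retrieve` takes a question and returns a ranked list of document ids.
    """
    if not cases:
        raise ValueError("at least one evaluation case is required")
    hits = 0
    for case in cases:
        returned = retrieve(case["question"])[:k]
        if case["expected_doc_id"] in returned:
            hits += 1
    return hits / len(cases)


if __name__ == "__main__":
    # Hypothetical evaluation set and a fake retriever, for demonstration only.
    cases = [
        {"question": "How do refunds work?", "expected_doc_id": "refund-002"},
        {"question": "What does the Team plan include?", "expected_doc_id": "pricing-001"},
    ]

    def fake_retrieve(question):
        return ["refund-002", "faq-009"]

    print(hit_rate_at_k(cases, fake_retrieve))  # 0.5
```

Run on every change to the knowledge base or retrieval settings, a check like this turns "the answers seem fine" into a number that can gate a release.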

If you’re comparing delivery approaches, this overview of application development models for startup products is useful because chatbot architecture choices are rarely isolated. They sit inside larger product and team decisions.

What audit-ready looks like in practice

Investors and technical reviewers won’t care that your bot feels modern if the internals are a mess.

They care whether the system is maintainable, documented, secure, and logically designed. They care whether third-party dependency choices make sense. They care whether the chatbot can be extended without brittle rewrites. They care whether data handling creates legal or operational risk.

An audit-ready chatbot usually shows its quality through artifacts, not adjectives:

Architecture diagrams with data flow and service boundaries

Documented repositories with sensible module separation

Access controls for admin tools, knowledge ingestion, and production systems

Evaluation workflows for testing prompts, retrieval, and handoff behavior

Deployment pipelines that support repeatable release management

That’s what turns a chatbot into infrastructure.

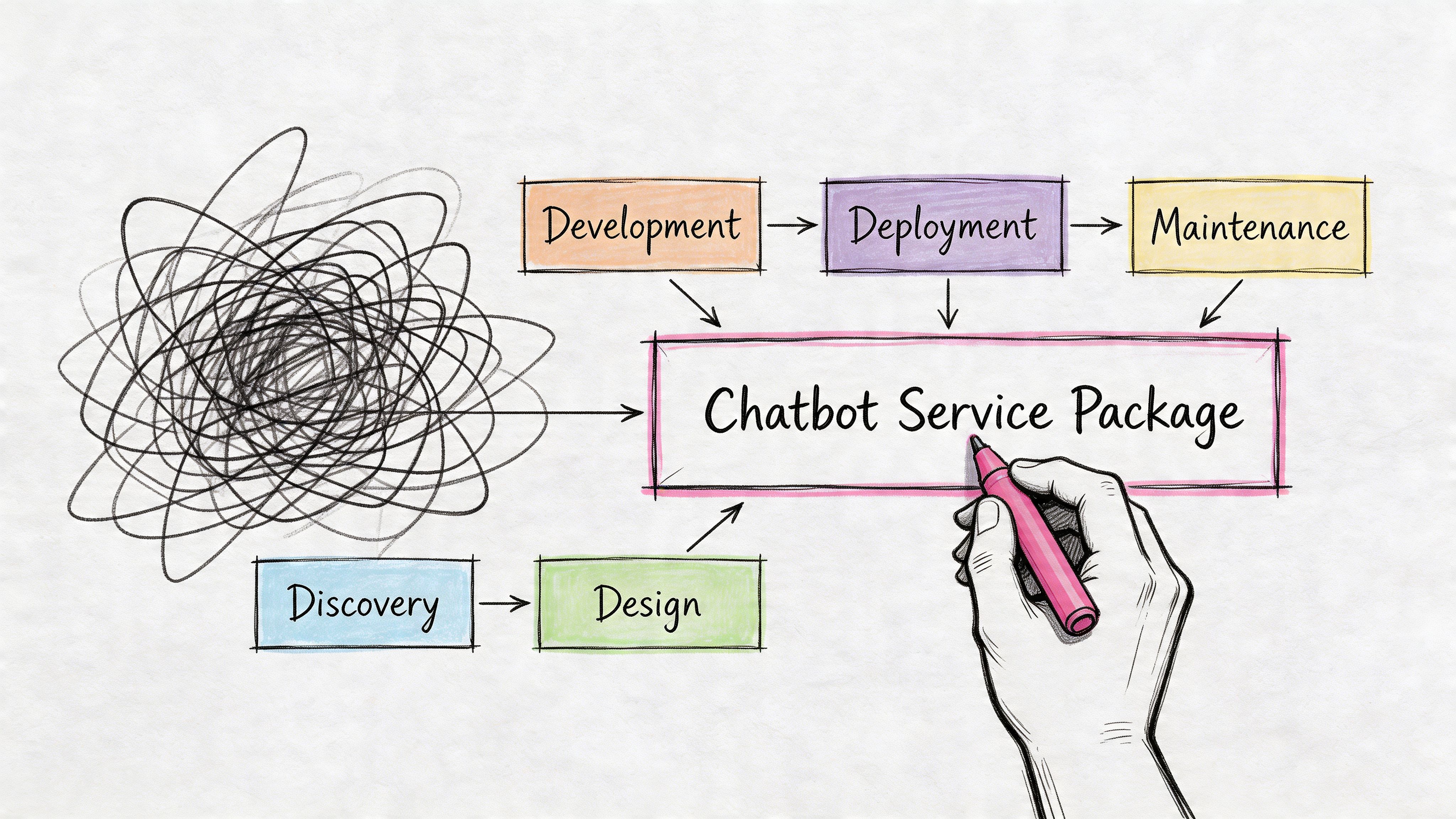

Decoding Chatbot Development Service Packages

Most proposals for chatbots development services are vague on purpose. They sell outcomes without showing the work product. That’s a problem because founders can’t judge quality if the scope hides the actual deliverables.

A strong engagement is usually built in phases. Not because agencies like process diagrams, but because each phase reduces a different kind of risk.

Product design and discovery

This phase should produce clarity, not presentations.

You want the team to map the target user journeys, define the first high-value use cases, specify escalation rules, and decide what systems the bot must touch. If discovery doesn’t include architecture decisions, it’s incomplete.

Ask for deliverables like:

Use-case prioritization: Which conversations matter first, and which are being deferred

Conversation blueprints: Core flows, edge cases, fallback behavior, and handoff rules

System map: Required integrations with CRM, ticketing, billing, authentication, or internal data stores

Risk register: Security, compliance, and operational failure points

Technical blueprint: Stack choices, deployment model, knowledge architecture, and ownership boundaries

Production-grade MVP development

This is the phase where weak vendors reveal their limitations. They’ll promise a polished front end and skip the disciplines that make the product durable.

What you should expect instead is a build phase that delivers an auditable codebase, integration reliability, and operational controls. The chatbot UI is only one output.

A credible MVP package usually includes:

Backend services for orchestration, session handling, and API calls

Prompt and response management with controlled versioning

Knowledge ingestion pipeline for docs, FAQs, and structured records

Admin controls so your team can update approved content without engineering bottlenecks

Testing coverage across functional paths, edge cases, and escalation logic

CI/CD setup so releases aren’t manual chaos

A proposal that spends pages on “AI capabilities” and almost nothing on deployment, logging, and failure handling is a branding document, not an engineering plan.

Scaling and strategic leadership

After launch, founders usually hit a new set of problems. The bot needs to support more channels, product lines, geographies, or internal teams. This is the point where one-off builders disappear and the value of a strategic partner becomes obvious.

The third phase should include leadership as much as implementation. Not broad “advisory,” but concrete technical direction.

Look for post-launch scope like this:

| Phase | What good looks like |
|---|---|
| Discovery | Journey maps, architecture decisions, integration scope, risk analysis |
| MVP build | Auditable codebase, tested flows, secure integrations, deployment pipeline |
| Scaling | Managed infrastructure, analytics reviews, roadmap decisions, governance updates |

The best packages also define who owns the system over time. That includes code repositories, cloud resources, model settings, vector stores if used, and documentation. If ownership is muddy, your leverage diminishes.

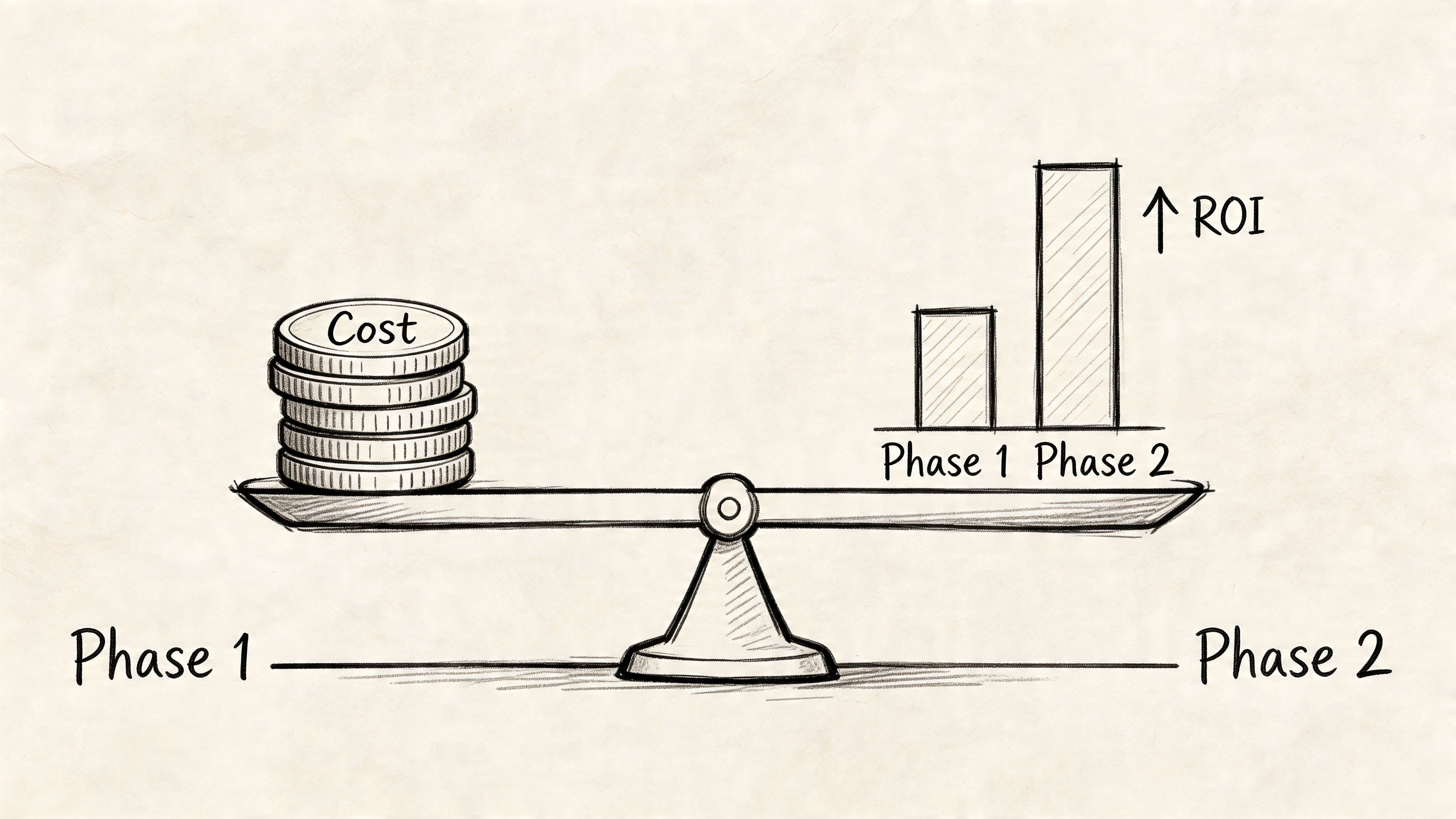

Budgeting for an Investor-Ready Chatbot

Cheap chatbot builds are expensive in the worst way. They look affordable at procurement time and become liabilities during growth.

The broader market size alone tells you this category has matured. The global chatbot market reached approximately $9.56 billion in 2025, according to Exploding Topics’ chatbot statistics summary. More important for founders, that source notes that investor-ready MVP development costs can range from $5,000 to $500,000. That range is wide because “chatbot” can mean anything from a simple scripted widget to a heavily integrated product layer.

Where the budget actually goes

Founders often focus on the visible layer. That’s usually the wrong place to optimize.

The same source breaks out major cost components for development. Key allocations include planning at $3,000 to $8,000, UX/UI design at $10,000 to $20,000, front-end development at $12,000 to $30,000, back-end development at $18,000 to $35,000, integration work at $8,000 to $20,000, testing at $3,000 to $10,000, deployment at $4,000 to $12,000, and annual maintenance at $8,000 to $20,000.

That budget profile says something important. The highest-value work isn’t cosmetic. It sits in the backend, integrations, testing, and deployment discipline. That’s exactly where technical debt either gets prevented or invited in.
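The arithmetic behind that observation is easy to check. The sketch below sums the quoted component ranges and computes how much of the one-time build sits in back-end, integration, testing, and deployment work. The grouping into "engineering" components is this example's assumption, not a category from the cited source.

```python
# One-time build components in USD as (low, high), taken from the ranges quoted above.
BUILD_COSTS = {
    "planning": (3_000, 8_000),
    "ux_ui_design": (10_000, 20_000),
    "front_end": (12_000, 30_000),
    "back_end": (18_000, 35_000),
    "integration": (8_000, 20_000),
    "testing": (3_000, 10_000),
    "deployment": (4_000, 12_000),
}
ANNUAL_MAINTENANCE = (8_000, 20_000)

# Assumed grouping for illustration: the components that sit below the UI.
ENGINEERING = ("back_end", "integration", "testing", "deployment")


def total_range(costs):
    """Sum the low ends and the high ends separately."""
    low = sum(lo for lo, _ in costs.values())
    high = sum(hi for _, hi in costs.values())
    return low, high


def share_range(costs, keys):
    """Share of the total taken by `keys`, at the low end and at the high end."""
    total_low, total_high = total_range(costs)
    part_low = sum(costs[k][0] for k in keys)
    part_high = sum(costs[k][1] for k in keys)
    return part_low / total_low, part_high / total_high


if __name__ == "__main__":
    low, high = total_range(BUILD_COSTS)
    print(low, high)  # 58000 135000
    print(low + ANNUAL_MAINTENANCE[0], high + ANNUAL_MAINTENANCE[1])  # 66000 155000
    print([round(s, 2) for s in share_range(BUILD_COSTS, ENGINEERING)])  # [0.57, 0.57]
```

On these figures the one-time build totals $58,000 to $135,000, or $66,000 to $155,000 including a first year of maintenance, and roughly 57% of the build budget goes to work the user never sees.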

Where founders underinvest

I see four recurring mistakes.

They underfund backend architecture. Then the bot can’t reliably connect to product data, account states, or customer systems.

They skimp on integrations. Then the bot becomes informational only, instead of operational.

They treat testing as optional. Then edge cases surface in front of customers.

They ignore maintenance. Then model behavior, content freshness, and service dependencies drift.

A founder shouldn’t ask, “What’s the cheapest chatbot we can ship?” The right question is, “What level of engineering turns this into an asset we can build on?”

My budgeting advice

If the chatbot affects acquisition, conversion, onboarding, support quality, or account operations, fund it like product infrastructure. Constrain scope if needed, but don’t strip out the engineering bones.

A sensible budget discussion should separate:

Must-have asset-building work, such as architecture, secure integrations, testing, and documentation

Optional enhancement work, such as additional channels, richer analytics, or advanced workflow automation

Deferred expansion paths, which can wait until usage patterns justify them

The right chatbot budget doesn’t buy “AI.” It buys sound architecture, reliable data access, and a codebase you won’t be embarrassed to show during diligence.

If a vendor’s number looks unusually low, assume they’re excluding the work that preserves valuation.

Measuring Success and ROI for Valuation

A founder walks into a board meeting saying the chatbot cut support tickets. An investor’s next question is the one that matters. Did it improve conversion, retention, operating margin, or product defensibility?

That is the standard.

Founders often inherit the wrong dashboard. They get containment, deflection, and session volume, then treat those numbers as proof of ROI. Those are operating metrics. Valuation comes from showing that the system improves a business process and produces proprietary data, workflows, and infrastructure your company owns.

Measure the asset, not just the activity

Start with technical quality because bad classification poisons every downstream metric. If the bot routes people into the wrong flows, your conversion data gets distorted, your escalation data becomes misleading, and your product team learns the wrong lessons.

Intent accuracy and resolution performance matter, but they are not the headline. The headline is whether the chatbot makes the company more investable.

Track the chain all the way through:

Conversation to pipeline: Which chatbot sessions turn into qualified opportunities, not just captured leads

Conversation to activation: Whether users reach the first meaningful product action faster after bot guidance

Conversation to retention: Whether the bot reduces churn risk by resolving onboarding, billing, or usage confusion before it becomes a support issue

Conversation to product insight: Which repeated questions expose missing features, weak docs, or broken flows

Conversation to proprietary asset growth: Whether transcripts, intents, and workflow data are improving your internal taxonomy, automations, and knowledge systems

That last point gets ignored too often. A good chatbot does not just answer questions. It generates labeled interaction data, exposes friction in your funnel, and creates reusable logic across support, onboarding, and sales. That is how a bot becomes part of your technical moat.

Pair each operational metric with a valuation metric

Never report a single chatbot KPI in isolation. Busy bots can still destroy trust.

If engagement rises because users are confused, session count is a warning sign, not a win. If containment rises because the bot stonewalls edge cases, the metric looks clean while customer sentiment gets worse. Use paired metrics that force honesty.

| Operational metric | Valuation-oriented companion metric |
|---|---|
| Containment rate | Successful outcome rate from contained conversations |
| Resolution rate | Improvement at a specific funnel or onboarding step |
| Intent accuracy | Lift in conversion, activation, or renewal after intervention |
| Escalation rate | Time to successful human resolution with full context preserved |
| Bot usage volume | Share of high-value journeys influenced by the chatbot |

This is the same discipline founders need when building an investor-ready MVP. Activity is not proof. Asset value is proof.
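As an example of pairing, the sketch below computes containment rate alongside its companion, the successful outcome rate within contained conversations. The record fields `escalated` and `goal_completed` are hypothetical names; they assume your event mapping records whether the user reached the outcome they came for.

```python
def paired_containment(conversations):
    """Return (containment_rate, success_rate_within_contained).

    Each conversation is a dict with boolean fields:
      "escalated"      - True if the conversation was handed to a human
      "goal_completed" - True if the user achieved the intended outcome
    """
    total = len(conversations)
    contained = [c for c in conversations if not c["escalated"]]
    if total == 0 or not contained:
        return 0.0, 0.0
    successes = sum(1 for c in contained if c["goal_completed"])
    return len(contained) / total, successes / len(contained)


if __name__ == "__main__":
    # Hypothetical sample: three contained conversations, only one successful.
    sample = [
        {"escalated": False, "goal_completed": True},
        {"escalated": False, "goal_completed": False},
        {"escalated": False, "goal_completed": False},
        {"escalated": True, "goal_completed": True},
    ]
    containment, success = paired_containment(sample)
    print(f"containment={containment:.2f}, success within contained={success:.2f}")
```

In this sample containment is 75%, which looks healthy on its own, while only a third of the contained conversations ended in a successful outcome. Reported together, the two numbers expose a bot that is stonewalling rather than resolving.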

What I would put in a board deck

Keep it simple. Show three layers.

First, prove the bot works technically. Show accuracy trends, failure clusters, escalation quality, and coverage of key intents.

Second, prove it improves a business function. Show that the chatbot shortens sales cycles, increases activation, reduces support handling cost, improves retention signals, or raises expansion readiness.

Third, prove it compounds. Show that each month of usage improves your knowledge base, intent library, workflow coverage, and customer intelligence. Investors pay more for systems that get stronger with use.

If you are comparing vendors, ask how their chatbot development service handles analytics, event mapping, and data ownership after launch. If they cannot explain how chatbot outputs connect to revenue, retention, and product learning, they are building a feature, not an asset.

A founder should be able to answer one question in one sentence: what business process is stronger, more defensible, and more measurable because this chatbot exists?

If you cannot answer that clearly, you do not have valuation-grade ROI yet.

The Founder's Vendor Selection Checklist

Vendor choice will determine whether your chatbot becomes an asset or technical debt. Founders often compare firms on speed, price, and interface quality. Those matter, but they don’t tell you who can build a durable system.

The fastest way to separate strategic engineering partners from commodity shops is to ask harder questions.

Questions that expose weak vendors

Ask these directly, and don’t accept vague answers.

How do you prevent technical debt in the MVP? If they answer with generic “best practices,” keep pushing. You want specifics on architecture, modularity, testing, and documentation.

What does audit-ready mean in your process? They should talk about repositories, deployment, logs, access controls, and system diagrams.

How do you handle knowledge updates and prompt changes after launch? If every content update requires a developer, the operating model is weak.

What’s your escalation design? A serious team plans for uncertainty and smooth human handoff.

Who owns the code, cloud resources, and data artifacts? Ownership ambiguity is a future crisis.

If you want a useful example of how service firms describe their offers, reviewing a public chatbot development service page can help you compare packaging language against the deeper technical questions above. Use resources like that as a screening tool, not as a substitute for diligence.

What good answers sound like

A strong vendor usually explains tradeoffs clearly. They won’t oversell autonomous magic. They’ll discuss constraints, governance, and where they’d narrow scope first.

Look for evidence of these capabilities:

Fractional CTO thinking: They can connect technical choices to fundraising, hiring, roadmap sequencing, and long-term ownership.

Engineering depth: They can discuss stack decisions, observability, integration patterns, and deployment discipline without hiding behind AI buzzwords.

Security awareness: They bring up data access, permissions, compliance boundaries, and operational risk early.

Product judgment: They know when not to automate, and they can explain why.

Red flags founders should treat seriously

Some red flags are easy to miss because they sound efficient.

They lead with templates. Reusable accelerators are fine. Template thinking is not.

They obsess over prompts and ignore systems. That usually means weak backend capability.

They can’t explain handoff logic. That means they haven’t designed for real-world failure.

They won’t define deliverables in artifacts. If you can’t list what you’ll receive, you can’t govern the work.

They avoid strategic accountability. If no one is thinking like a technical leader, the project drifts into feature work.

Good chatbots development services don’t just ship a bot. They help the founder make durable technical decisions the company can live with.

The final screening move

Ask every vendor the same closing question: “If we raise and need to scale this fast, what parts of your build will hold up, and what parts will need redesign?”

The wrong firm will promise everything holds up. The right one will answer with nuance, constraints, and a coherent roadmap. That honesty is usually a better indicator of competence than any polished demo.

Conclusion: Your Chatbot Is Your Asset

A startup chatbot shouldn’t be treated like a side feature. It touches customer communication, product workflow, internal knowledge, and operational trust. That makes it a strategic engineering decision.

The right build starts with choosing the right intelligence tier, then getting the architecture right, budgeting for the parts that preserve value, measuring outcomes that matter to investors, and selecting a partner who thinks like an architect instead of a task vendor.

Founders who approach chatbots development services this way end up with more than automation. They end up with a stronger product surface, cleaner internal systems, better data, and a codebase that supports growth instead of slowing it down.

If you’re building a chatbot and want it engineered as an investor-ready asset instead of a disposable feature, Buttercloud helps founders turn product ideas into production-grade MVPs with the ar...