The Founder's Guide: 10 Cloud Cost Optimization Best Practices for 2026

For a founder, your cloud infrastructure isn't just a technical utility; it's a direct reflection of your company's operational discipline and a critical line item under investor scrutiny. Bloated cloud spend signals a lack of engineering rigor and can erode valuation before you even get to your Series A. It’s a common trap: in the race to build an MVP, founders overprovision, neglect monitoring, and accumulate technical debt that manifests as a runaway monthly bill.

This isn't just about saving a few dollars. It's about engineering a technical asset that's as financially efficient as it is scalable. True investor-readiness means demonstrating that every dollar spent on infrastructure generates a predictable return, turning your cloud architecture into a key part of your technical moat. To truly treat your cloud bill as a valuation metric, it's vital to implement actionable IT cost optimization strategies that align engineering with financial goals.

This guide moves beyond generic advice. We'll break down the 10 essential cloud cost optimization best practices that transform your infrastructure from a liability into a core component of your company's valuation. These are the specific, actionable tactics we implement to ensure our partners build audit-ready products. You will learn how to master:

Commitment discounts and right-sizing

Dynamic resource allocation and spot instances

Storage, network, and container efficiency

Database optimization and serverless architecture

Crucial monitoring and tagging strategies

Each practice is designed to provide practical implementation details, helping you build an investor-ready product built on both performance and fiscal prudence.

1. Reserved Instances & Commitment Discounts

One of the most direct and impactful cloud cost optimization best practices involves shifting predictable, baseline workloads from on-demand pricing to commitment-based discounts. Cloud providers like AWS (Reserved Instances, Savings Plans), Google Cloud (Committed Use Discounts), and Azure (Reservations) offer substantial savings, often 30-70%, in exchange for a one or three-year commitment to a specific amount of compute or database capacity. For founders with a stable production environment, this is a foundational strategy to reduce operational burn.

The key is to reserve only the capacity you are certain you will use 24/7. This “always-on” baseline represents your core production infrastructure, not the spiky, variable traffic that can be handled by on-demand instances. Committing to this predictable portion of your workload transforms a variable operational expense into a predictable, lower fixed cost.

Actionable Implementation

Analyze Baseline Usage: Before purchasing, use tools like AWS Cost Explorer or Azure Cost Management to analyze at least 30-60 days of usage data. Identify the lowest, consistent level of compute usage for a specific instance family (e.g., your baseline is always at least 8

t3.mediuminstances).Start with Shorter Terms: For post-MVP startups, begin with 1-year commitments. This provides significant savings without locking you into a long-term plan that might not fit your architecture in 24 months.

Combine with On-Demand: Reserve only your predictable minimum. Use on-demand instances, managed by auto-scaling groups, to handle traffic spikes. This hybrid model delivers the best of both worlds: low costs for your base load and flexibility for variable demand.

A common scenario for a venture-backed SaaS company post-Series A is to lock in 3-year commitments for their production database and core application servers. This move immediately improves their gross margin and demonstrates fiscal discipline to their board and future investors. Understanding these financial levers is critical, and a detailed cost analysis can help you model these scenarios. To get a better sense of how infrastructure choices affect your budget, exploring an app development cost calculator can provide valuable perspective on early-stage financial planning.

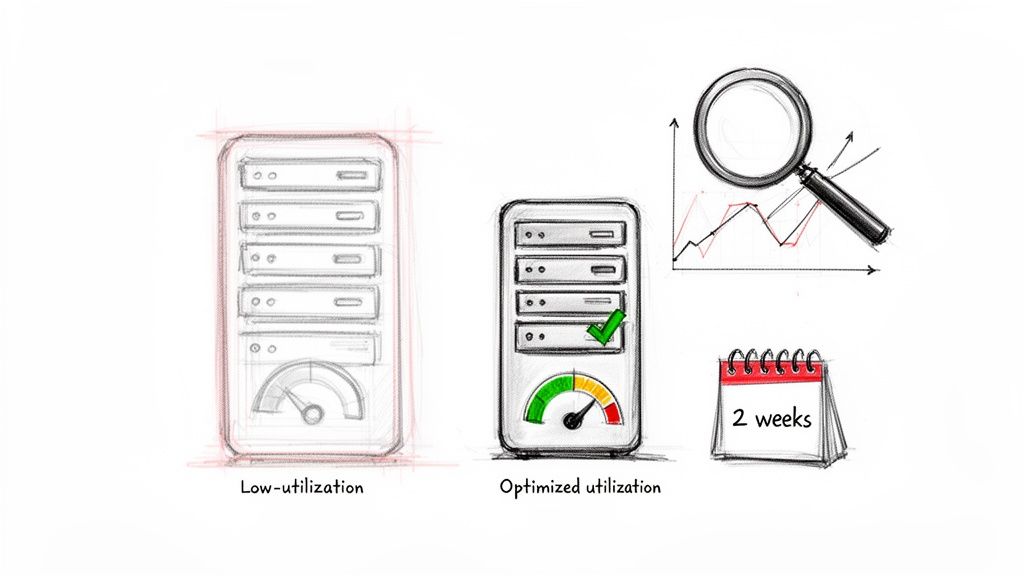

2. Right-Sizing Instances & Continuous Monitoring

One of the most common drains on a startup's runway is over-provisioning infrastructure. Right-sizing is the practice of methodically analyzing actual resource utilization—CPU, memory, and network I/O—to match instance types directly to real workload demands. This approach corrects the frequent early-stage mistake of launching oversized resources "just in case" and ensures you pay only for the capacity you truly need.

This isn't a one-time fix but a continuous process of observation and adjustment. By constantly monitoring performance metrics, you can identify and eliminate waste, turning a bloated infrastructure into a lean, efficient technical asset. For example, an early-stage SaaS startup might discover its web tier is running on t3.large instances when performance data shows that t3.small instances would suffice, instantly cutting compute costs for that service by 75%.

Actionable Implementation

Establish a Monitoring Baseline: Before making any changes, use native tools like AWS Compute Optimizer or third-party platforms like Datadog to gather at least two to four weeks of utilization data. This period should capture typical traffic peaks and troughs to inform your decisions accurately.

Automate Recommendations: Configure your monitoring tools to provide automated right-sizing recommendations. These services analyze performance data and suggest more cost-effective instance types or sizes that meet your application's needs without compromising performance.

Schedule Regular Reviews: Integrate right-sizing into your team's operational rhythm. Make it a recurring task in your monthly or bi-weekly engineering review to assess underutilized resources and act on recommendations.

Implement During Low-Traffic Windows: Plan any instance changes during off-peak hours. This minimizes potential disruption to your users and allows you to validate the performance of the new, smaller instances under light load before peak traffic returns.

For a startup building its MVP, the initial focus is speed, often leading to over-provisioned infrastructure that becomes a technical and financial liability. We’ve seen AI platforms realize that costly GPU-accelerated machines were being used for simple preprocessing tasks that non-GPU instances could handle. Correcting this architectural misstep is a key part of building an investor-ready product that demonstrates both technical capability and financial discipline.

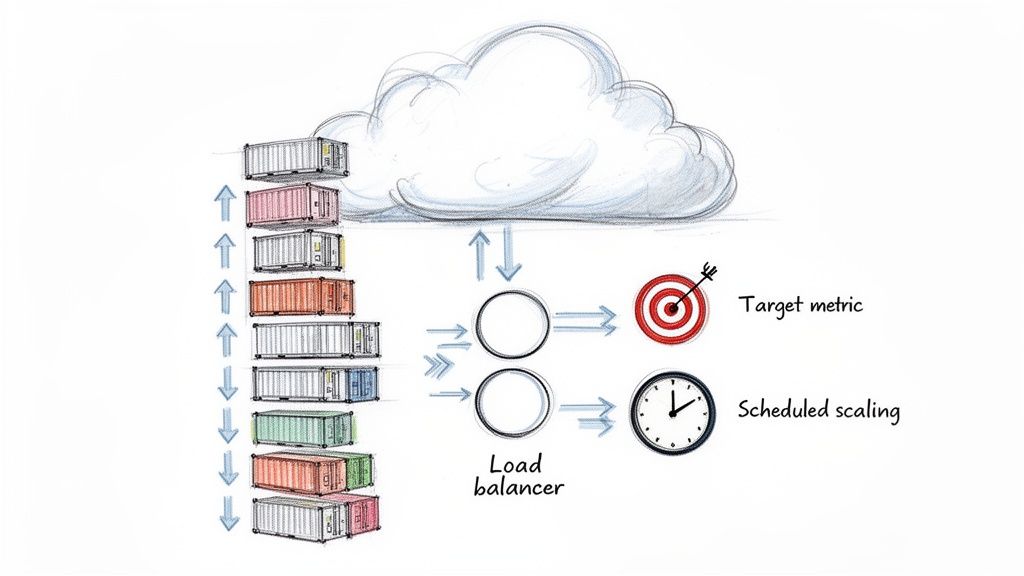

3. Auto-Scaling & Dynamic Resource Allocation

Overprovisioning is one of the most common drains on a startup's cloud budget. Auto-scaling directly counters this by dynamically adjusting compute, container, and database resources to match real-time demand. Instead of paying for peak capacity 24/7, you engineer an environment that scales up to meet demand surges and, just as importantly, scales down during quiet periods, ensuring you only pay for what you use.

This practice moves infrastructure management from a static, manual task to an automated, intelligent system. For an e-commerce MVP experiencing a promotional spike or an AI chatbot handling fluctuating request volumes, auto-scaling maintains performance without manual DevOps intervention, aligning operational costs directly with user activity and revenue. This level of efficiency is a key component of building a scalable, audit-ready product.

Actionable Implementation

Start with Target Tracking: Begin with target tracking policies (e.g., maintain an average CPU utilization of 60%). These are simpler to configure than step scaling and provide excellent results for most common workloads, like web servers or API endpoints.

Define Smart Scale-Down Rules: A common mistake is focusing only on scaling up. Implement aggressive but safe scale-down policies to shrink your infrastructure footprint as soon as traffic subsides, preventing unnecessary costs.

Use Scheduled Scaling for Predictable Patterns: If you know your B2B SaaS platform has a usage surge every weekday at 9 AM for reporting, use scheduled scaling to pre-provision capacity. This improves user experience and is more cost-effective than waiting for reactive metrics to trigger a scale-up event.

A critical mistake for early-stage founders is scaling the primary production database. This is expensive, risky, and causes downtime. Instead, offload read-heavy traffic to auto-scaling groups of read replicas and implement a robust caching layer (like Redis or Memcached) to absorb repetitive queries. This architectural decision not only saves money but is a foundational element of a resilient system, often examined during VC technical due diligence. Expertise in these architectural patterns is a hallmark of strong cloud migration consulting services that focus on building investor-ready infrastructure.

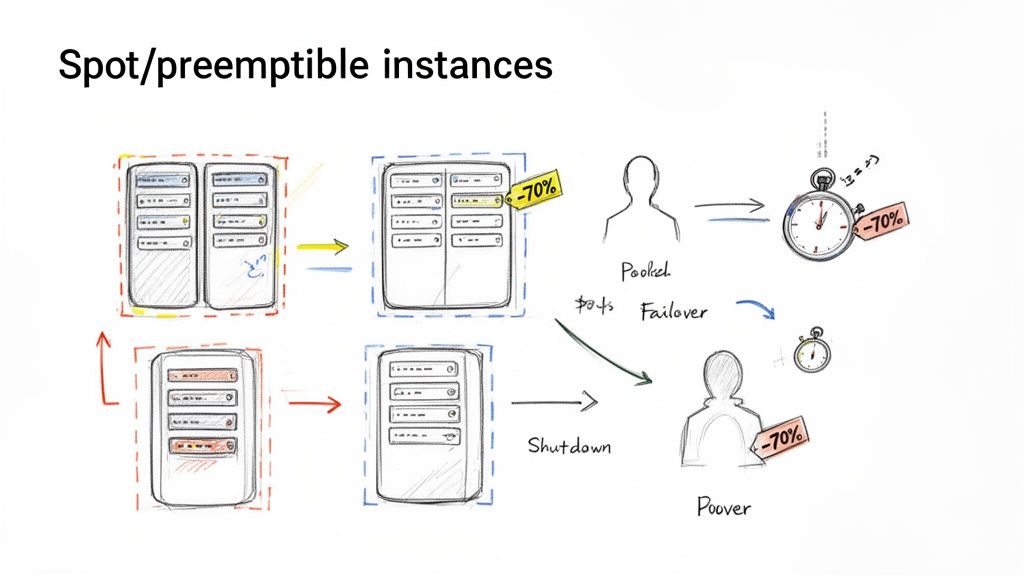

4. Spot Instances & Preemptible VMs for Non-Critical Workloads

For workloads that can tolerate interruption, tapping into spare cloud provider capacity is one of the most powerful cloud cost optimization best practices available. Providers like AWS (Spot Instances), Google Cloud (Preemptible VMs), and Azure (Spot VMs) sell this unused capacity at steep discounts, often reaching 70-90% off on-demand prices. This strategy is ideal for fault-tolerant applications like batch processing, data analysis, machine learning training, and even development or staging environments.

These instances can be reclaimed by the cloud provider with little notice, making them unsuitable for critical, customer-facing services. However, for the right type of workload, they present a massive cost-saving opportunity. Instead of paying a premium for constant availability, you engineer your application to be resilient to interruptions, transforming a significant operational cost into a much smaller, variable one.

Actionable Implementation

Identify Interruptible Workloads: Analyze your architecture for processes that are stateless or can easily resume from a checkpoint. Good candidates include background report generation, video transcoding, scientific simulations, or CI/CD build agents.

Diversify Instance Pools: Don't rely on a single instance type. Configure your Spot requests across multiple instance families and sizes (e.g.,

m5.large,c5.large,r5.large). This strategy, often called a "fleet," dramatically reduces the chance of a widespread interruption affecting your workload.Automate and Handle Terminations: Implement logic to gracefully handle termination notices. This involves saving state, draining connections, and ensuring your orchestration system (like Kubernetes or an auto-scaling group) automatically requests replacement instances to continue the job.

A common use case for a data-intensive startup is running large-scale machine learning training jobs. An AI company could run its model training on a fleet of Spot GPU instances overnight, saving tens of thousands of dollars per month compared to on-demand pricing. This allows them to iterate faster and run more experiments within their funding constraints, turning infrastructure cost from a bottleneck into a competitive advantage.

5. Data Storage Optimization & Lifecycle Policies

A significant and often overlooked portion of a founder's cloud bill comes from data storage. As applications scale, logs, backups, and user-generated content accumulate, leading to silently escalating costs. Implementing data storage optimization and automated lifecycle policies is a critical practice for moving data to more cost-effective storage tiers based on access patterns, directly impacting your operational spend. Providers like AWS and Google Cloud offer powerful, automated tools to manage this process.

This strategy involves classifying data by its access frequency and business value over time. For instance, data that is frequently accessed needs to be on high-performance, higher-cost storage (like Amazon S3 Standard). However, as that data ages and is accessed less, it can be automatically transitioned to cheaper tiers, such as Infrequent Access, Glacier, or Deep Archive, reducing storage costs by up to 90% or more. This is a foundational element of a mature FinOps culture.

Actionable Implementation

Analyze Access Patterns: Use tools like AWS S3 Storage Lens to get a clear picture of your data access patterns. This analysis will reveal which data sets are "hot" (frequently accessed) versus "cold" (rarely accessed), informing your tiering strategy without guesswork.

Implement Automated Lifecycle Policies: Configure rules to automatically transition objects between storage classes. For a SaaS platform, customer backups might move from S3 Standard to Glacier Deep Archive after 90 days. For an analytics product, query results older than one year can be automatically archived.

Use Intelligent Tiering for Unpredictable Data: For datasets with unknown or changing access patterns, services like S3 Intelligent-Tiering automatically move data between frequent and infrequent access tiers based on real-time usage. This delivers savings without requiring manual analysis.

Compress and Clean Up: Before storing data, especially backups and logs, compress it. This simple step can reduce the storage footprint by over 80%. Additionally, set lifecycle rules to permanently delete temporary files or expired logs, as undeleted data is a common source of cost leakage.

Many early-stage startups find that over 50% of their storage bill is from logs that are never accessed again. A SaaS company we partnered with was spending thousands on storing raw application logs. By implementing a simple policy to transition logs to Infrequent Access after 30 days and then to Glacier after 90 days, they cut their log storage costs by 70%, freeing up capital that was reinvested into product development. This demonstrates how operational discipline directly fuels growth.

6. Network & Data Transfer Cost Optimization

Often overlooked until they become a major expense, network and data transfer costs can silently erode your margins. Cloud providers typically charge for data leaving their network (egress), meaning every API response, file download, or cross-region database sync can add up. One of the most critical cloud cost optimization best practices is architecting your system to minimize these charges from day one, treating data transfer as a first-class citizen in your cost model.

Controlling egress costs involves making deliberate architectural choices. This means keeping data transfers internal to a provider's region whenever possible, using Content Delivery Networks (CDNs) to serve assets from the edge, and optimizing the very size of the data packets you send. For a founder engineering an investor-ready product, demonstrating control over these variable costs signals a mature approach to scalable infrastructure.

Actionable Implementation

Measure Egress Hotspots: Before optimizing, you must measure. Use provider tools like AWS Cost and Usage Report (CUR) with Amazon Athena or Google Cloud's detailed billing export to pinpoint which services and regions are generating the highest egress fees. Often, the culprit is an S3 bucket serving media or a database replicating across regions unnecessarily.

Implement a CDN Early: For any application serving static assets (images, CSS, JavaScript) or video, a CDN like Amazon CloudFront or Cloudflare is non-negotiable. By caching content closer to users, a CDN drastically reduces requests hitting your origin servers and, more importantly, the expensive egress bandwidth from your primary region. A video platform can cut bandwidth costs by over 60% this way.

Use Internal Endpoints: When services within your cloud environment need to communicate (e.g., an EC2 instance accessing an S3 bucket), use VPC endpoints (like AWS PrivateLink). This routes traffic over the provider's private network instead of the public internet, completely eliminating data transfer charges for that traffic.

A common mistake for early-stage startups is to build a monolithic application in one region and then serve a global user base directly from it. The resulting egress charges can be staggering. A better approach is to deploy regional endpoints or read replicas for your database in high-traffic geographies, ensuring that data travels the shortest, most cost-effective path to the user. This demonstrates foresight and builds a technical moat around operational efficiency.

7. Container & Orchestration Efficiency (ECS/EKS Cost Optimization)

As startups increasingly adopt microservices and containerized architectures, controlling the costs of orchestration platforms like Amazon ECS and Kubernetes (EKS) becomes a critical discipline. Effective cloud cost optimization here moves beyond the virtual machine level to the individual container. By precisely defining resource needs and using flexible compute options, you can run the same workloads on a fraction of the infrastructure, directly lowering your cloud bill.

This strategy involves treating your container compute layer not as a fixed cost but as a dynamic resource pool. The goal is to pack workloads efficiently (bin packing) onto the fewest possible nodes without compromising performance or availability. For a founder engineering an investor-ready product, demonstrating this level of operational maturity signals a strong command of scalable, cost-effective architecture.

Actionable Implementation

Define Accurate Resource Requests/Limits: Analyze your container's actual CPU and memory usage with monitoring tools. Set Kubernetes requests (the minimum reserved) and limits (the hard cap) based on this data, not guesswork. This prevents over-provisioning and allows orchestrators to schedule pods more densely.

Integrate Spot Instances: For fault-tolerant or non-production workloads, use Spot Instances (EC2 Spot or Fargate Spot). Orchestration tools like Karpenter or native EKS node group configurations can automatically manage the lifecycle of Spot nodes, delivering savings of up to 90% on compute for applicable workloads.

Implement Pod Disruption Budgets (PDBs): Before using Spot Instances for production-adjacent workloads, define PDBs. This Kubernetes feature ensures a minimum number of replicas are always running, allowing your application to gracefully handle the voluntary interruptions inherent to Spot Instances.

Optimize Container Images: Bloated container images increase storage costs and slow down startup times, which can impede rapid auto-scaling. Use multi-stage builds and lean base images like Alpine or distroless to create smaller, more efficient artifacts.

An AI startup running complex training models on EKS can reduce their monthly compute bill by tens of thousands of dollars by shifting those jobs to EKS node groups backed by EC2 Spot Instances. The ephemeral nature of the jobs makes them a perfect fit for Spot, converting a massive capital expense into a manageable operational one. Mastering this practice is a key component of building a cost-efficient, scalable platform.

8. Database Optimization & Right-Sizing

Databases often become a major cost center as an application scales, but they also present a significant opportunity for optimization. Treating your database as a static, over-provisioned resource is a direct path to wasted spend. A core cloud cost optimization best practice involves actively managing your database layer by right-sizing instances, adopting modern scaling architectures, and refining the queries that drive your application.

This process moves beyond simple infrastructure tweaks and into the application architecture itself. For many founders, their database is the performance bottleneck and the single largest line item on their cloud bill. By addressing this directly, you can unlock both performance gains and substantial cost reductions, improving your gross margin and making your product more scalable.

Actionable Implementation

Profile Database Usage: Before making changes, use tools like AWS RDS Performance Insights, slow query logs, or Percona Monitoring and Management to get a clear picture of your actual load. Identify peak CPU, memory usage, and I/O patterns to make data-driven decisions.

Implement Read Replicas: For read-heavy applications, scaling vertically by choosing a larger instance is often the wrong first step. Instead, offload read queries to one or more read replicas. This distributes the load and can defer a costly instance upgrade.

Optimize Inefficient Queries: Poorly written queries, especially N+1 problems common in ORMs, can drive CPU usage sky-high. Use application performance monitoring (APM) tools or query analysis to find and fix these code-level issues. A single query fix can sometimes reduce database CPU by over 60% without any infrastructure changes.

Consider Serverless for Variability: For workloads with unpredictable or spiky traffic patterns, managed serverless databases like Amazon Aurora Serverless or DynamoDB are excellent choices. An API platform moving from provisioned RDS to DynamoDB with on-demand scaling can cut its database bill significantly, sometimes by thousands per month.

For a SaaS platform experiencing unpredictable user activity, moving from a fixed-size RDS instance to Aurora Serverless can be a game-changer. This architectural shift aligns costs directly with usage, potentially cutting the database bill by over 50% by eliminating payment for idle capacity during off-peak hours. This is a strategic move that turns a fixed operational cost into a variable one that grows with your revenue.

9. Cloud Cost Monitoring, Allocation & Tagging Strategy

You cannot optimize what you cannot measure. Implementing a robust tagging and allocation strategy is the foundational step toward financial accountability in the cloud. This practice moves cost from an opaque, monolithic bill into a clear, detailed ledger, showing exactly which teams, projects, or product features are driving expenses. For a founder, this visibility is non-negotiable for calculating accurate unit economics and demonstrating fiscal control to investors.

This discipline involves systematically applying metadata tags (e.g., team:engineering, project:q4-feature, environment:production) to every cloud resource. These tags act as financial signposts, allowing tools like AWS Cost Explorer, CloudHealth, or Kubecost to filter, group, and report on spending with precision. Without this, a growing cloud bill becomes a mystery that no one can solve, let alone reduce.

Actionable Implementation

Define a Tagging Schema Early: Before significant infrastructure is deployed, create a mandatory tagging policy. Define key tags that must be applied to all resources, such as

CostCenter,Project,Owner, andEnvironment. This prevents the monumental task of retroactively tagging thousands of assets.Enforce Tagging with Policy: Use cloud-native tools like AWS IAM policies or Azure Policy to enforce your tagging schema. Configure policies to block the creation of any new resource that lacks the required tags, embedding cost awareness directly into your deployment workflow.

Implement Showback/Chargeback: Start with a "showback" model, where you regularly share detailed cost reports with team leads to build awareness. As your organization matures, evolve to a "chargeback" model, where costs are formally allocated back to departmental budgets, creating direct financial accountability.

Use Anomaly Detection: Configure cost anomaly detection services. For a growing startup, this is your early warning system. It can automatically flag a runaway batch job or a misconfigured service that might otherwise cost thousands before being noticed during a monthly review.

For a Series A SaaS platform, the ability to allocate infrastructure costs to specific customer tiers is a game-changer. By tagging resources associated with 'Free', 'Pro', and 'Enterprise' plans, the company can accurately calculate the gross margin for each tier. This data is critical for making strategic decisions about pricing, feature-gating, and sales focus, directly impacting the company's valuation and path to profitability.

10. Serverless Architecture for Event-Driven & Batch Workloads

Adopting a serverless architecture for specific workloads is a powerful cloud cost optimization best practice that directly ties infrastructure spend to actual usage. Instead of paying for idle servers, platforms like AWS Lambda, Google Cloud Functions, and Azure Functions allow you to run code in response to events, paying only for the compute time consumed down to the millisecond. This model is exceptionally effective for tasks that are intermittent or have unpredictable demand, such as API backends, webhook processors, and batch data jobs.

For founders, this approach eliminates entire categories of operational overhead. There are no servers to patch, manage, or scale. For example, a webhook processor built on AWS Lambda can automatically scale from zero to handling thousands of events per minute and then scale back to zero, incurring costs only during active processing. This pay-per-execution model is ideal for building lean, high-margin MVPs and scalable systems without committing to fixed infrastructure costs.

Actionable Implementation

Identify Ideal Workloads: Review your application for event-driven components. Good candidates include image processing triggered by S3 uploads, processing incoming data from an IoT device, or handling user authentication requests. Scheduled tasks (cron jobs) are also a perfect fit.

Optimize Function Performance: Keep functions small and focused on a single task. This reduces cold start times and makes them easier to maintain. Monitor execution duration and memory usage, as small optimizations can yield significant savings at scale.

Manage State Externally: Serverless functions are stateless. Use managed services like DynamoDB, Redis, or S3 to manage state, ensuring your architecture remains scalable and resilient without relying on a persistent server instance.

A common scenario for an early-stage SaaS is building their entire API backend on API Gateway and AWS Lambda. One of our portfolio companies supports over $50,000 in Annual Recurring Revenue with a backend infrastructure cost of less than $200 per month by using a purely serverless model. This strategy keeps their burn rate low while providing massive, automatic scalability from day one.

10-Point Cloud Cost Optimization Comparison

Solution | Implementation complexity | Resource requirements | Expected outcomes | Ideal use cases | Key advantages |

|---|---|---|---|---|---|

Reserved Instances & Commitment Discounts | Medium — procurement, forecasting and management | Upfront capital commitment; billing tools and capacity forecasts | 30–70% lower compute/storage costs for committed baseline | Stable production workloads post‑MVP or predictable steady-state services | High long-term savings; budget predictability; multi-service applicability |

Right‑Sizing Instances & Continuous Monitoring | Low–Medium — monitoring and iterative adjustments | Monitoring tools (CloudWatch, Datadog), 2–4 weeks baseline data | Typically 20–40% compute savings with minimal perf impact | Early-stage deployments correcting over-provisioning | Fast, data-driven savings; minimal architecture changes |

Auto‑Scaling & Dynamic Resource Allocation | Medium–High — policy tuning and testing | Autoscaling configs, metrics, load balancers, automation skills | Reduces idle costs and maintains performance under spikes | Variable-traffic APIs, event-driven services, e‑commerce | Pays for demand only; handles spikes automatically; aligns spend to usage |

Spot Instances & Preemptible VMs | Medium — requires fault-tolerant design and orchestration | Orchestration, retry logic, diversified instance pools | 70–90% cost reduction for interruptible workloads | Batch jobs, ML training, dev/test, non‑critical processing | Extreme cost savings; encourages resilient architectures |

Data Storage Optimization & Lifecycle Policies | Low–Medium — apply policies and some data engineering | Storage analytics, lifecycle rules, compression/dedup tools | 50–80% storage cost reduction for aging data | Backups, logs, archival data, data lakes | Automated tiering; immediate savings; compliance-friendly archival |

Network & Data Transfer Cost Optimization | Medium — architectural changes and CDN strategy | CDN, regional architecture, API/payload optimization | Often 30–50% reduction in egress bandwidth costs and lower latency | Video, mobile apps, global SaaS, high-bandwidth workloads | Lowers egress charges; improves latency and UX |

Container & Orchestration Efficiency (ECS/EKS) | High — orchestration tuning and capacity planning | Kubernetes/ECS expertise, autoscalers (Karpenter), cost tools | 30–70% infra reduction via bin packing and Spot integration | Microservices, multi‑tenant platforms, containerized ML workloads | Fine-grained utilization; fast container-level scaling; Spot benefits |

Database Optimization & Right‑Sizing | Medium–High — DB tuning, possible migrations | DB monitoring, query profiling, read replicas or serverless options | 20–50% DB cost reduction; improved query performance | Data-intensive apps, variable DB demand, high-read workloads | Eliminates idle DB costs (serverless); improves application performance |

Cloud Cost Monitoring, Allocation & Tagging Strategy | Low–Medium — governance and process adoption | Tagging schema, cost tools (Cost Explorer, Kubecost), training | Visibility enabling targeted savings and budget control | Multi-team organisations, Series A+ startups, finance reporting | Accountability, cost attribution, anomaly detection and forecasting |

Serverless Architecture for Event‑Driven & Batch Workloads | Low–Medium — refactor to functions and event triggers | Dev effort to refactor, observability, managed vendor services | Pay-per-execution; eliminates idle infra costs; automatic scaling | Event-driven APIs, webhooks, batch tasks, MVPs | Zero idle cost; rapid scaling; reduced operational overhead |

From Founder to Architect: Building a Cost-Efficient Future

The journey from a promising idea to a scalable, investor-ready product is paved with critical decisions. As we've explored, mastering cloud cost optimization best practices is not merely a technical task for your engineering team; it is a fundamental business strategy that directly impacts your runway, profitability, and ultimate valuation. This is not about penny-pinching. It's about engineering a capital-efficient foundation that demonstrates operational maturity to investors and customers alike.

We’ve moved beyond abstract theory into actionable tactics: from securing long-term savings with Reserved Instances to achieving granular efficiency through right-sizing databases and implementing container orchestration. Each practice, whether it’s deploying Spot Instances for batch processing or designing serverless architectures for event-driven tasks, serves a dual purpose. It reduces immediate operational expenditure while simultaneously building a resilient, scalable technical asset. A well-architected cloud environment, governed by strong tagging and monitoring, is a signal that you are building a business, not just a prototype.

Synthesizing Strategy into Action

The key takeaway is that cloud cost management is an ongoing discipline, not a one-time fix. It requires a cultural shift where financial awareness is embedded directly into the development lifecycle.

Proactive vs. Reactive: Don't wait for the end-of-month bill to cause alarm. Implement continuous monitoring, set intelligent alerts, and use detailed tagging from day one to maintain visibility.

Architecture is Strategy: Your architectural choices have direct financial consequences. Adopting patterns like auto-scaling and serverless isn't just about performance; it’s a deliberate move toward a pay-for-value model that aligns costs directly with usage and demand.

Commitment with Confidence: Tools like AWS Savings Plans and Azure Reserved Instances offer significant discounts, but they require foresight. Your ability to confidently make these commitments stems from a deep understanding of your workload patterns, a skill developed through diligent monitoring and analysis.

For founders and architects focused on building a cost-efficient future, consulting a comprehensive CTO's Guide to Cloud Cost Optimization for Startups can provide invaluable strategies to deepen this understanding and refine your approach. This discipline transforms your infrastructure from a simple cost center into a source of competitive advantage.

Ultimately, these cloud cost optimization best practices are about building a technical moat around your business. An efficient, secure, and scalable infrastructure is incredibly difficult for competitors to replicate. It shows investors that you are a responsible steward of their capital and are prepared for the rigors of growth. It proves you understand that elite engineering is about creating business longevity, not just shipping features. This is the mark of a founder who is also an architect, building not just for today, but for a high-valuation future.

Ready to build an MVP that’s engineered for scale and passes VC due diligence from day one? At Buttercloud, we act as your boutique engineering partner, embedding these cost-optimization principles into your product’s DNA. Contact Buttercloud to transform your idea into an audit-ready, high-valuation technical asset.