HIPAA-Compliant Software Development: A Founder's Guide

You’re probably in one of two situations right now.

You’ve got a strong HealthTech idea and you’re trying to get an MVP into users’ hands without building a regulatory disaster. Or you already have a product, someone finally asked a hard security question, and now you’re realizing HIPAA isn’t a box you check at the end.

Treating HIPAA as a box to check at the end is the wrong frame.

HIPAA-compliant software development isn’t paperwork layered on top of a startup. It’s architecture, process, and operating discipline that make your company more investable. If your product touches protected health information, compliance work isn’t overhead. It’s part of the asset you’re building.

From HealthTech Idea to Investable Asset

You ship an MVP, sign a pilot, and get the investor follow-up you wanted. Then diligence starts. They ask where ePHI is stored, which vendors touch it, who has production access, whether audit logs are immutable, and what happens if a laptop with patient data is lost. If your team cannot answer in detail, the product stops looking like an asset and starts looking like a liability.

That is the true cost of treating HIPAA as cleanup work.

The Office for Civil Rights can impose civil monetary penalties that reach $2,134,831 per violation category, per year, as outlined in the HHS HIPAA Enforcement Rule summary. Founders fixate on fines because they are visible. Discerning investors focus first on something else: remediation cost, delayed enterprise sales, weaker diligence outcomes, and a smaller valuation multiple.

If you handle ePHI, architecture decisions affect company value from day one. Data flows, access design, logging, vendor selection, and deployment discipline all show up later in procurement reviews, security questionnaires, and financing conversations.

Why investors care early

In HealthTech, weak security posture signals weak execution. It tells investors your team may win attention but struggle to survive diligence, pass procurement, or scale without expensive rework.

Good HIPAA development speeds the company up. It forces clear system boundaries, defined ownership, cleaner infrastructure, and fewer improvisations in production. That reduces engineering churn and protects roadmap velocity when your first serious customer asks hard questions.

Practical rule: If compliance is absent from the MVP definition, the MVP is incomplete.

Compliance builds the moat

Treat HIPAA as product infrastructure with financial upside. A company that can prove disciplined handling of ePHI is easier to insure, easier to sell into enterprise healthcare, and easier to defend in diligence. That raises confidence in the business, not just the codebase.

This matters even more in categories close to regulated workflows, including diagnostics, remote monitoring, patient communications, care coordination, and billing. Teams operating near those constraints often gain useful perspective from adjacent work on accelerating medtech success, because the same rule applies. Build for scrutiny early, or pay for it later.

Here is the shift founders need to make:

| Weak framing | Strong framing |
|---|---|
| “How little HIPAA work can we get away with?” | “What technical choices reduce diligence risk and increase company value?” |
| “We’ll clean it up after traction.” | “We’ll design it so growth does not create security debt.” |
| “Compliance is legal’s problem.” | “Compliance is an engineering, product, and operations discipline.” |

The best HealthTech founders do not bolt compliance onto a demo. They build a company that can withstand review, close larger deals, and command a better valuation because the technical foundation already supports trust.

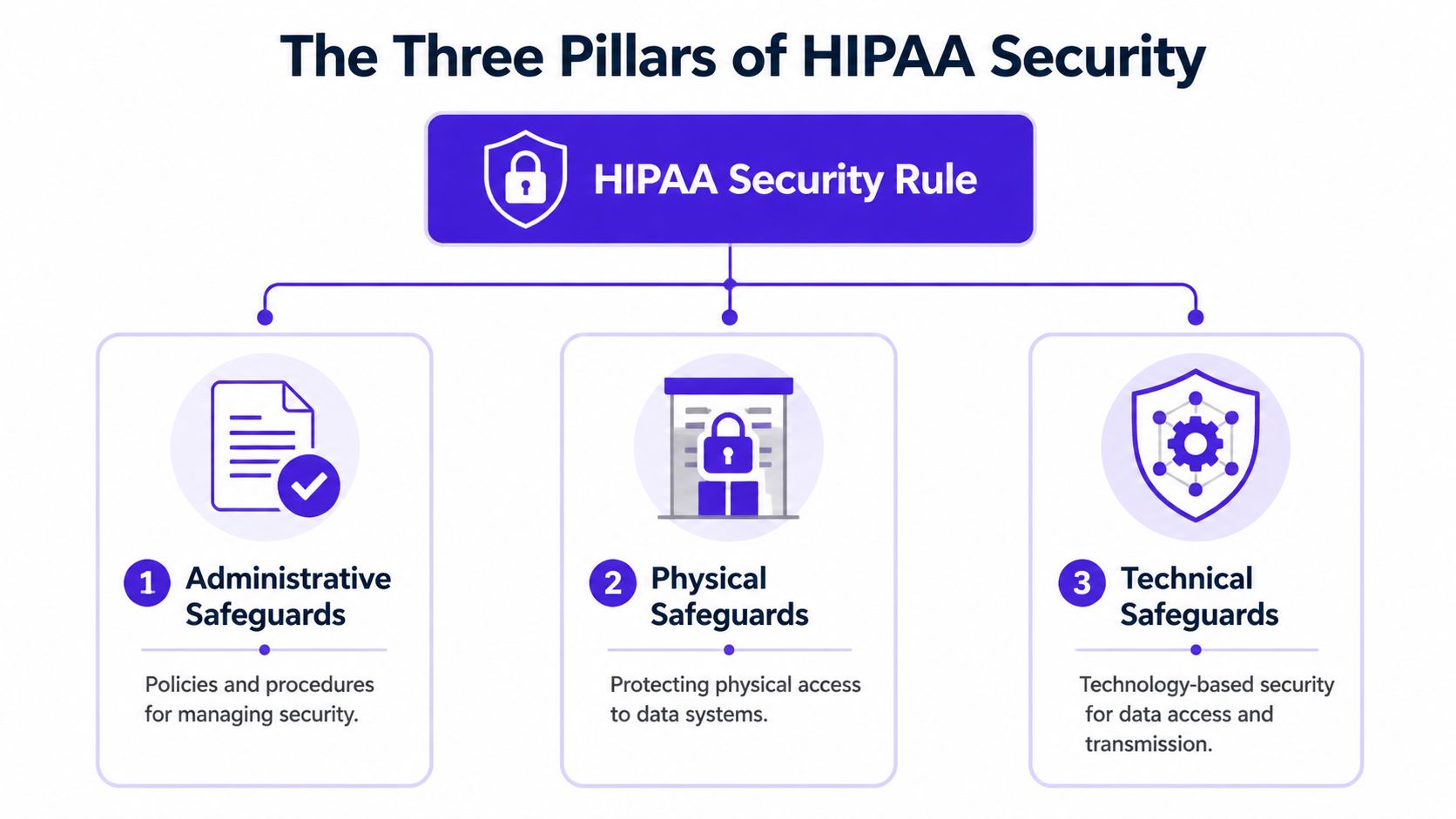

The Three Pillars of HIPAA Security

HIPAA feels abstract until you simplify it into its operating model. Think of it as three layers protecting the same system: administrative safeguards, physical safeguards, and technical safeguards.

If one layer is weak, the others carry more pressure than they should.

Administrative safeguards

This is the policy and decision layer. It answers who owns security, how risk gets assessed, how people are trained, and what happens when something goes wrong.

For a startup, this means you need operating discipline even if your team is small. Someone has to own risk assessment. Someone has to define access approval rules. Someone has to maintain incident procedures and vendor oversight. If nobody owns those functions, the company owns the failure.

Administrative safeguards usually include:

Risk analysis: You need a living view of where ePHI enters, where it moves, who can touch it, and what can fail.

Workforce training: Engineers, contractors, support staff, and founders all need clear rules for handling PHI.

Policy enforcement: Access, change management, authentication, logging, and breach response need written expectations.

Physical safeguards

A lot of founders underestimate this category because they assume cloud infrastructure makes it irrelevant. It doesn’t.

Physical safeguards still matter because laptops, backup media, office environments, and remote work setups can all expose sensitive data. If your team works from coffee shops on unsecured devices, you have a physical problem even if your app runs in a hardened cloud environment.

A practical founder checklist looks like this:

Device controls: Company-managed laptops, encrypted drives, screen locks, and controlled local storage.

Access boundaries: Restrict who can physically access systems, backup hardware, and admin workstations.

Remote work hygiene: Treat home offices, coworking spaces, and travel as part of your security perimeter.

The easiest way to fail compliance is to think only about software while your team handles sensitive access on unmanaged devices.

Technical safeguards

Most startup teams concentrate their early effort here, which is understandable. This category covers the controls built directly into your application and infrastructure.

A founder should expect these baseline capabilities:

| Safeguard | What it does |
|---|---|
| Access control | Limits who can view or modify ePHI |
| Audit controls | Records what happened, who did it, and when |
| Integrity protections | Helps prevent improper alteration or destruction of data |
| Authentication | Confirms the user is who they claim to be |
| Transmission security | Protects data while it moves across networks |
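Transmission security is the easiest of these safeguards to make concrete. HIPAA does not prescribe a protocol version, so the TLS 1.2 floor below is an assumption based on common practice, and `make_client_tls_context` is a hypothetical helper name. The sketch uses only Python's standard `ssl` module to build a client context that verifies certificates, checks hostnames, and refuses older protocol versions.

```python
import ssl


def make_client_tls_context() -> ssl.SSLContext:
    """Client-side TLS settings for outbound calls that carry ePHI (hypothetical helper)."""
    # SERVER_AUTH purpose turns on certificate verification and hostname checking.
    ctx = ssl.create_default_context(ssl.Purpose.SERVER_AUTH)
    # Refuse SSLv3, TLS 1.0, and TLS 1.1; TLS 1.3 is still negotiated when both sides support it.
    ctx.minimum_version = ssl.TLSVersion.TLSv1_2
    return ctx
```

The context can then be passed to standard clients, for example `urllib.request.urlopen(url, context=ctx)` or `http.client.HTTPSConnection(host, context=ctx)`, so every outbound call carrying ePHI uses the same verified, version-pinned settings instead of per-call defaults.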

The mistake is treating these pillars as separate departments. In strong HIPAA-compliant software development, they reinforce each other. Policy drives implementation. Device controls support operational discipline. Technical controls generate the evidence that your policies are effective.

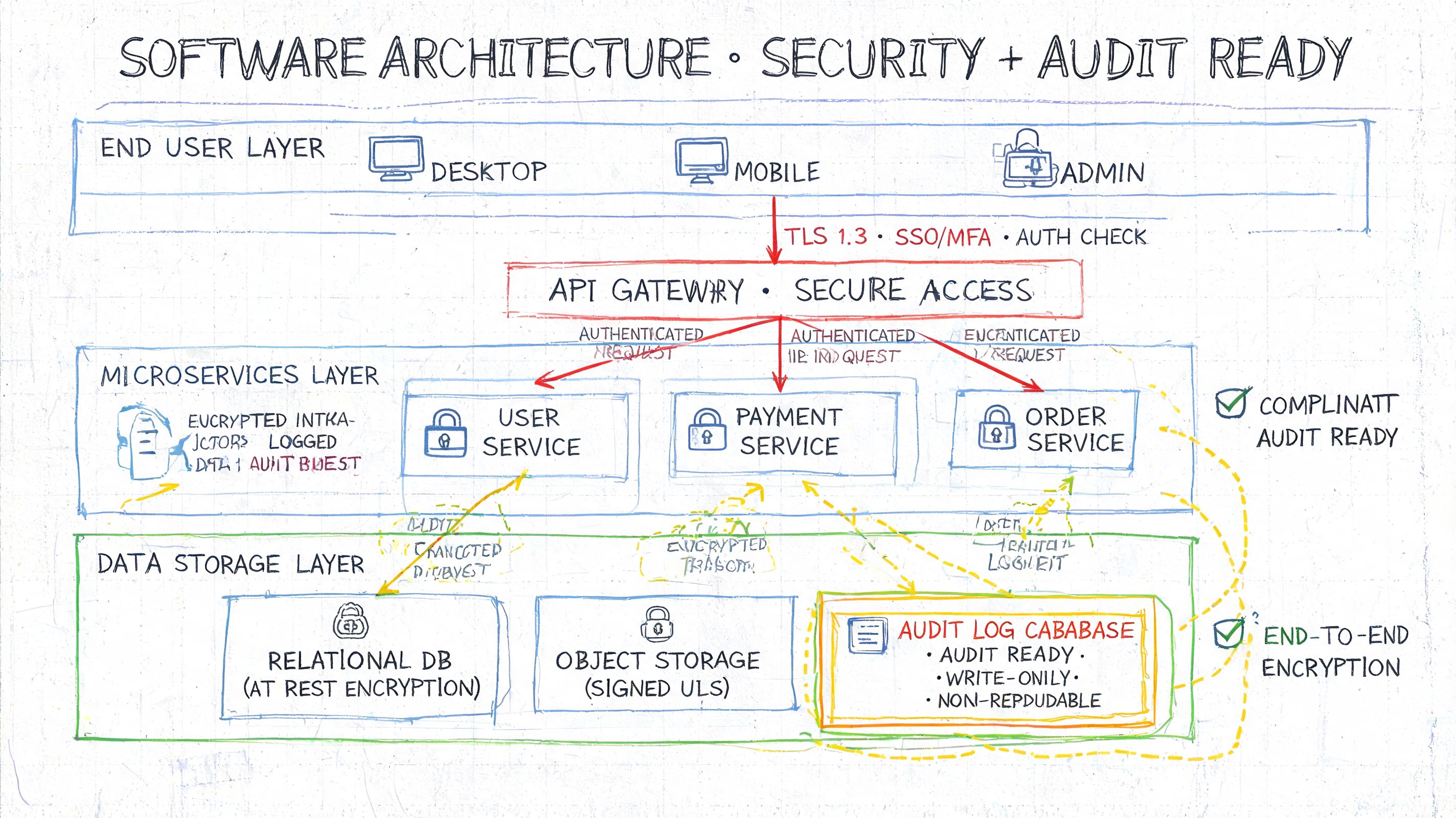

Designing an Audit-Ready Software Architecture

An investor asks a simple question during diligence. "Show me where patient data enters your system, who can touch it, and how you would prove that access was appropriate six months ago." If your team answers with a whiteboard sketch and good intentions, your product looks fragile. If you answer with clear boundaries, enforced controls, and evidence by design, your software starts to look like an asset worth paying a premium for.

You build that outcome at the architecture level. Audit-ready HIPAA development is not a security add-on. It is a product design choice that protects revenue, lowers diligence risk, and creates a technical moat that weaker competitors will struggle to copy.

Start with the trust boundaries

Your architecture should make four things obvious: where ePHI enters, where it is stored, which services are allowed to process it, and which actions must always leave an audit trail.

Founders should be able to answer these questions immediately:

Where does ePHI enter the system?

Which services can read it?

Which users can create, update, or export it?

How is tenant separation enforced?

What leaves the protected data boundary, and for what business reason?

If those answers live in tribal knowledge, your company has a diligence problem.

The right pattern for an early-stage HealthTech product is a controlled core system with narrow, explicit interfaces. Put authentication in front of every protected workflow. Enforce authorization at the API and resource level, not just in the frontend. Write immutable logs for sensitive actions. Treat backups, replicas, and disaster recovery copies with the same controls as production. HHS guidance on the HIPAA Security Rule safeguards supports this model by focusing on access control, audit controls, integrity, authentication, and transmission security as system requirements, not optional extras.
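A minimal sketch of authorization at the API and resource level might look like the following. Everything here is hypothetical: the role map, the `Principal` and `AuditTrail` types, and the `authorize` function stand in for whatever policy engine and log store your stack uses, and the in-memory list is only a placeholder for append-only storage. The point is the shape: the server decides, access is denied by default, and every decision, allowed or denied, leaves a record.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone

# Hypothetical role-to-permission map; a real system would load this from reviewed policy config.
ROLE_PERMISSIONS = {
    "clinician": {"patient_record:read", "patient_record:update"},
    "support": {"ticket:read"},
}


@dataclass(frozen=True)
class Principal:
    user_id: str
    role: str
    mfa_verified: bool


class AccessDenied(Exception):
    pass


@dataclass
class AuditTrail:
    # Placeholder for append-only storage; an in-memory list is for illustration only.
    events: list = field(default_factory=list)

    def record(self, principal: Principal, action: str, resource_id: str, allowed: bool) -> None:
        self.events.append({
            "ts": datetime.now(timezone.utc).isoformat(),
            "user_id": principal.user_id,
            "role": principal.role,
            "action": action,
            "resource_id": resource_id,
            "allowed": allowed,
        })


def authorize(principal: Principal, action: str, resource_id: str, audit: AuditTrail) -> None:
    """Server-side check that runs before any handler reads or writes ePHI."""
    # Deny by default: unknown roles get an empty permission set, and MFA is required.
    allowed = principal.mfa_verified and action in ROLE_PERMISSIONS.get(principal.role, set())
    # Record the decision either way; denied attempts are often the more useful signal.
    audit.record(principal, action, resource_id, allowed)
    if not allowed:
        raise AccessDenied(f"{principal.user_id} may not {action} on {resource_id}")
```

Logging denials matters as much as logging grants, because repeated denied attempts are one of the clearest early signs of probing or misconfigured access.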

Multi-tenant SaaS needs deliberate isolation

Multi-tenant design is usually the right business decision for a startup. It keeps costs under control and speeds up product iteration. It also creates one of the easiest ways to destroy trust if you implement it carelessly.

Tenant isolation has to exist in the database, the application layer, background processing, analytics pipelines, caches, search indexes, admin tools, and support workflows. Teams often secure the main tables and forget the side channels. That is where avoidable exposure happens.
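One way to make tenant scope the default rather than a convention is to bind the tenant when the data-access object is created, so no query can be issued without it. The sketch below assumes a single `patients` table and uses SQLite only so it runs anywhere; `TenantScopedRepo` and `make_demo_db` are hypothetical names. In production you would likely pair this with a database-level control such as PostgreSQL row-level security, so a missed code path still cannot cross tenants.

```python
import sqlite3


def make_demo_db() -> sqlite3.Connection:
    conn = sqlite3.connect(":memory:")
    # tenant_id is part of the primary key, so identical patient ids in two tenants never collide.
    conn.execute(
        "CREATE TABLE patients ("
        " tenant_id TEXT NOT NULL, id TEXT NOT NULL, name TEXT,"
        " PRIMARY KEY (tenant_id, id))"
    )
    return conn


class TenantScopedRepo:
    """Hypothetical data-access layer bound to exactly one tenant at construction time."""

    def __init__(self, conn: sqlite3.Connection, tenant_id: str):
        if not tenant_id:
            raise ValueError("tenant_id is required")
        self._conn = conn
        self._tenant_id = tenant_id

    def add_patient(self, patient_id: str, name: str) -> None:
        # The tenant is stamped from the repository, never from request input.
        self._conn.execute(
            "INSERT INTO patients (tenant_id, id, name) VALUES (?, ?, ?)",
            (self._tenant_id, patient_id, name),
        )

    def get_patient(self, patient_id: str):
        # The tenant predicate lives here, not in callers, so it cannot be forgotten.
        return self._conn.execute(
            "SELECT id, name FROM patients WHERE tenant_id = ? AND id = ?",
            (self._tenant_id, patient_id),
        ).fetchone()

    def list_patients(self) -> list:
        return self._conn.execute(
            "SELECT id, name FROM patients WHERE tenant_id = ? ORDER BY id",
            (self._tenant_id,),
        ).fetchall()
```

Callers never pass `tenant_id` into individual queries; it comes from the authenticated session when the repository is constructed, which removes the most common way a query forgets its tenant filter.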

A clean architecture usually includes:

Tenant-aware data models: Every read and write path enforces tenant scope by default.

Fine-grained authorization: Use RBAC or attribute-based rules so clinicians, patients, internal ops staff, and support personnel see only the records tied to their role and context.

Separated privileged workflows: Admin actions need stronger authentication, tighter approval paths, and more detailed logging than standard user activity.

Scoped observability: Logs, traces, dashboards, and alert payloads should never become a shadow copy of ePHI.
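For scoped observability, a last-line-of-defense filter on log handlers can scrub obvious identifiers before anything reaches disk or a log vendor. This is a sketch built on Python's standard `logging.Filter`; the patterns in `REDACTION_PATTERNS` and the `RedactPHIFilter` name are illustrative assumptions, not a complete list of PHI identifiers, and the real fix is to avoid logging ePHI in the first place.

```python
import logging
import re

# Illustrative patterns only; a real list must be reviewed against your own data model.
REDACTION_PATTERNS = [
    (re.compile(r"\b\d{3}-\d{2}-\d{4}\b"), "[REDACTED-SSN]"),
    (re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+"), "[REDACTED-EMAIL]"),
    (re.compile(r"\bMRN[:#]?\s*\d+\b", re.IGNORECASE), "[REDACTED-MRN]"),
]


class RedactPHIFilter(logging.Filter):
    """Rewrites each record's message with known identifier patterns masked."""

    def filter(self, record: logging.LogRecord) -> bool:
        message = record.getMessage()  # applies %-style args first
        for pattern, replacement in REDACTION_PATTERNS:
            message = pattern.sub(replacement, message)
        # Store the redacted text and drop args so handlers don't re-interpolate them.
        record.msg = message
        record.args = None
        return True  # keep the record; only its content changes
```

Attach it with `handler.addFilter(RedactPHIFilter())` on each handler rather than on a logger: logger-level filters only see records logged directly to that logger, while handler-level filters also apply to records propagated from child loggers.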

If you plan to scale with distributed services, this article on microservices on Kubernetes for regulated workloads is a useful reference. The business rule is simple. Every new service that touches ePHI increases your compliance surface area, so service boundaries must be justified, documented, and enforced.

AI changes your risk model

AI features raise product value. They also raise the cost of sloppy architecture.

The mistake is treating AI as a thin feature on top of your existing app. It is a separate risk domain with its own storage paths, data lineage questions, and audit requirements. Prompts, outputs, embeddings, training sets, retrieval indexes, and fine-tuning artifacts can all become compliance surfaces if they contain or derive from ePHI.

One AI-focused HIPAA guide notes that enforcement trends in 2025 included fines exceeding $10 million tied to inadequate de-identification practices in machine learning contexts. That is why model-level controls now deserve board-level attention.

Set hard rules early:

Do not train models casually on production data.

Separate inference services from raw PHI stores.

Isolate retrieval layers for RAG systems and log data lineage.

Treat prompts, model outputs, embeddings, and evaluation datasets as governed assets.

Require architecture review before any team introduces third-party AI APIs into clinical or patient-facing workflows.

Build your AI layer so you can explain where every token came from, why it was allowed into the system, and how it can be deleted or traced later.
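A lineage registry is one way to make that traceable. The sketch below is hypothetical: `Artifact` and `LineageRegistry` are illustrative names, and a real system would persist this mapping alongside the vector store or training pipeline. It records which source records each derived artifact (embedding, prompt, evaluation example) came from, refuses artifacts without a documented purpose, and can answer which artifacts must be deleted when a source record is.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Artifact:
    artifact_id: str
    kind: str                       # e.g. "embedding", "prompt", "eval_example"
    source_record_ids: frozenset    # ePHI records this artifact was derived from
    approved_purpose: str           # documented reason it was allowed into the AI layer


@dataclass
class LineageRegistry:
    """Hypothetical registry mapping derived AI artifacts back to their source records."""
    artifacts: dict = field(default_factory=dict)

    def register(self, artifact: Artifact) -> None:
        if not artifact.approved_purpose:
            raise ValueError(f"{artifact.artifact_id}: no documented purpose")
        self.artifacts[artifact.artifact_id] = artifact

    def trace(self, artifact_id: str) -> frozenset:
        """Where did this artifact come from?"""
        return self.artifacts[artifact_id].source_record_ids

    def derived_from(self, record_id: str) -> list:
        """Which artifacts would be affected if this record were deleted or corrected?"""
        return sorted(
            a.artifact_id for a in self.artifacts.values() if record_id in a.source_record_ids
        )

    def purge_record(self, record_id: str) -> list:
        """Drop lineage entries for a record and return the IDs downstream stores must delete."""
        affected = self.derived_from(record_id)
        for artifact_id in affected:
            del self.artifacts[artifact_id]
        return affected
```

`purge_record` returns the affected artifact IDs rather than deleting vectors itself, on the assumption that each downstream store (vector index, cache, evaluation set) needs its own deletion step that can be logged and verified.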

That is what audit-ready architecture means in practice. It protects data, shortens due diligence, and turns compliance work into a valuation advantage instead of a tax on growth.

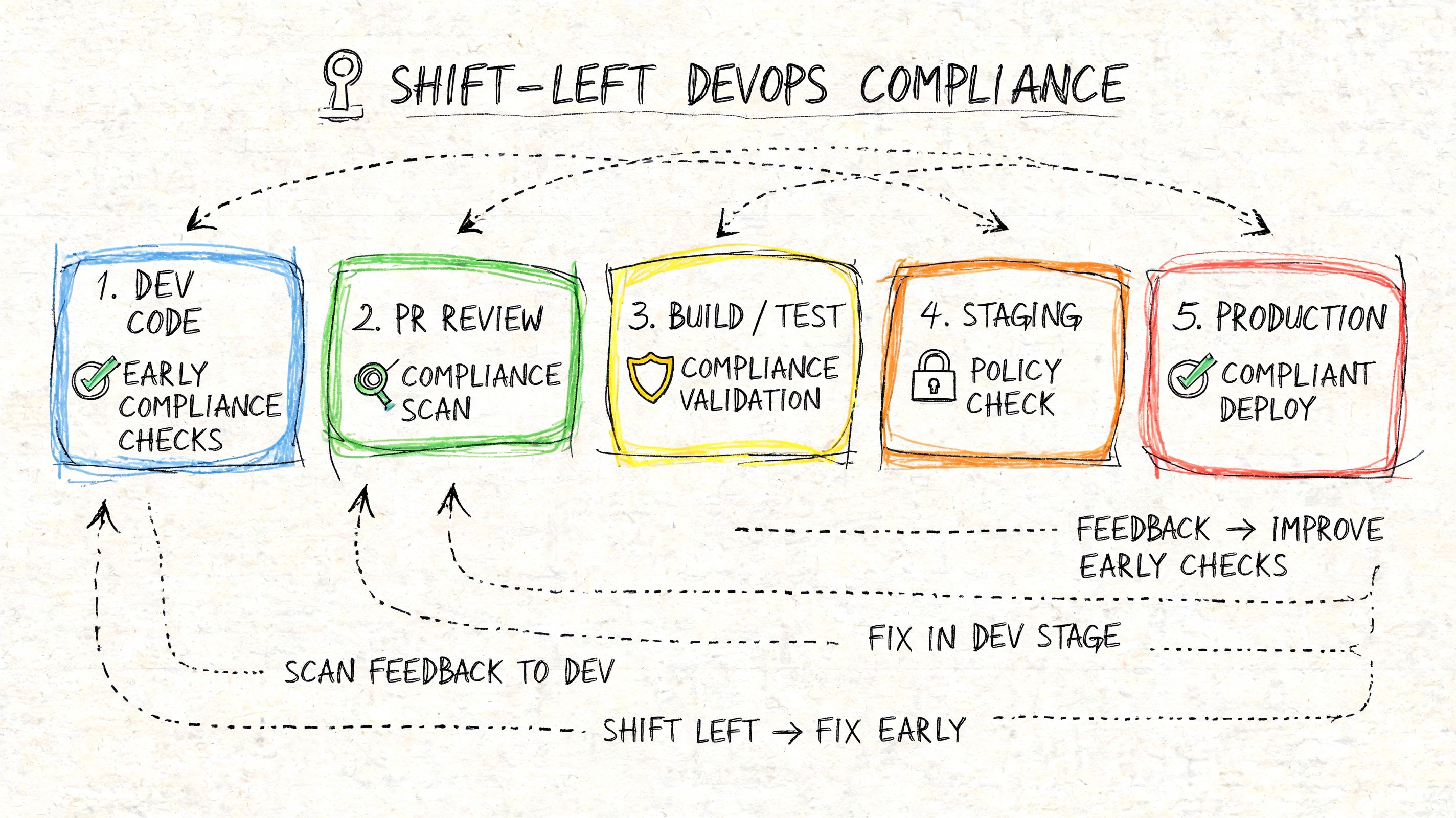

Embedding Compliance in Your DevOps Pipeline

If compliance enters the process at release time, you’ve already lost. You’ve delayed risk discovery, accumulated bad assumptions, and made every fix more expensive.

The only sane approach is shift-left security inside your engineering workflow.

One shift-left HIPAA SDLC guide states that integrating HIPAA requirements into a shift-left Secure SDLC reduces the likelihood of a breach by 65% compared with retrofitting controls later, and that retrofitting can cost up to 10 times more to remediate.

What shift-left actually means

It doesn’t mean “care about security earlier” as a slogan. It means putting concrete checks into sprint planning, code review, CI, and deployment workflows.

A founder should expect these controls to exist before launch:

Requirements with compliance intent: User stories should define how ePHI is collected, minimized, stored, transmitted, and audited.

Threat modeling during design: Teams should review risky flows before implementation, not after incidents.

Automated code and dependency checks: SAST tools like SonarQube, SCA tools like Snyk, and infrastructure scanning tools like Checkov should run in CI.

Release gates: Builds with serious security findings shouldn’t move forward because a deadline got tight.

Artifact retention: Scan results, approvals, and deployment logs should be preserved for future audits and investor diligence.
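A release gate can be as simple as a script that fails the CI job when blocking findings exist. The sketch assumes scan output from your SAST, SCA, and IaC tools has already been normalized into one JSON list of objects with `id`, `severity`, `tool`, and `title` fields; that format, the `high` threshold, and the function names are assumptions, since each real tool emits its own report schema.

```python
import json
import sys

# Assumed normalized schema: a JSON list of {"id", "severity", "tool", "title"} objects,
# produced by converting each scanner's native report in an earlier pipeline step.
SEVERITY_RANK = {"low": 1, "medium": 2, "high": 3, "critical": 4}


def blocking_findings(findings, threshold="high", accepted_ids=frozenset()):
    """Findings at or above the threshold that lack an approved, recorded exception."""
    floor = SEVERITY_RANK[threshold]
    blockers = []
    for f in findings:
        # Unknown severity labels rank as critical, so the gate fails closed.
        rank = SEVERITY_RANK.get(str(f.get("severity", "")).lower(), SEVERITY_RANK["critical"])
        if rank >= floor and f["id"] not in accepted_ids:
            blockers.append(f)
    return blockers


def main(report_path: str) -> int:
    with open(report_path, encoding="utf-8") as fh:
        findings = json.load(fh)
    blockers = blocking_findings(findings)
    for f in blockers:
        print(f"BLOCKING [{f.get('tool', '?')}] {f['id']}: {f.get('title', '')}")
    # A non-zero exit code fails the CI job, which is what stops the release.
    return 1 if blockers else 0


if __name__ == "__main__" and len(sys.argv) == 2:
    sys.exit(main(sys.argv[1]))
```

In CI this would run as a step such as `python release_gate.py findings.json` (the filename is hypothetical). Treating unknown severities as critical means a parser change fails the build instead of quietly passing it, and `accepted_ids` is where documented, approved exceptions plug in, which keeps exception decisions in the same record as the scan results.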

Automation is how startups stay fast

Founders sometimes hear this and worry they’re signing up for heavy process. They’re not. Good automation removes manual chaos.

The alternative is much worse. Engineers stop trusting releases. Security reviews become ad hoc. Founders get surprised by diligence questions. Teams spend nights reconstructing evidence that should have existed automatically.

Use your CI/CD system as a control plane, not just a deployment tool. This overview of CI/CD DevOps practices is useful if you need to align release speed with policy enforcement.

A practical pipeline for HIPAA-compliant software development should check code, containers, dependencies, infrastructure definitions, and deployment approvals in one traceable path. That gives you consistency. It also gives you evidence.

If your compliance proof depends on tribal knowledge, you don’t have compliance. You have optimism.

What founders should demand from the team

Don’t ask whether the team “takes security seriously.” Ask for operating proof.

Show the CI checks: What runs on every pull request and every release.

Show the fail criteria: Which findings block deployment.

Show the logs: Where scan history, approvals, and environment changes are retained.

Show the ownership model: Who reviews exceptions and who signs off.

That discipline doesn’t slow product teams down. It keeps the codebase investable.

Managing Vendor Risk and BAAs

A signed BAA is necessary. It is not sufficient.

Founders get into trouble when they assume the contract itself transfers the risk. It doesn’t. If a vendor touches PHI, your company still owns the decision to use that vendor, the way they’re integrated, and the oversight around that relationship.

One vendor oversight analysis states that 65% of 2025 HIPAA violations stemmed from vendor mismanagement rather than direct breaches by the covered entity. That should change how you think about third-party tooling.

The hidden chain of trust

Your risk surface isn’t just your cloud provider. It includes every analytics tool, support platform, communications API, AI service, subcontractor, and fractional development partner that can access systems or data.

The common founder mistake is using a vendor because they say they are “HIPAA-ready” or “HIPAA-eligible.” Those phrases don’t protect you. Configuration matters. Access pathways matter. Data flow matters. Subprocessors matter.

Use this lens when reviewing vendors:

| Vendor question | Why it matters |
|---|---|
| Will they create, receive, maintain, or transmit PHI? | Determines whether a BAA is required |
| Do they rely on subprocessors? | Extends your risk chain |
| Can your team restrict and audit access? | Contracts are useless without operational controls |
| Can PHI be excluded or minimized? | Less exposure is always better |
| What happens on termination? | Data return and destruction must be clear |

Don’t stop at contract review

A BAA should be the start of vendor governance, not the finish line.

Founders should put these controls in place:

Inventory every PHI-touching vendor: If it’s not in the inventory, it can’t be governed.

Map data flow by vendor: Know exactly what data each service receives and why.

Review access paths: Check support accounts, admin panels, exported reports, and backup handling.

Reassess after product changes: A harmless integration can become dangerous when a new feature pushes PHI through it.

Control subcontractors: If outside developers, consultants, or support partners can access production, treat them as part of the compliance scope.
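The vendor inventory itself can be checked automatically rather than reviewed only by hand. This sketch is illustrative: the `Vendor` fields, the annual `REVIEW_INTERVAL`, the `vendor_issues` function, and the example vendor names are assumptions. It flags PHI-handling vendors without a signed BAA, data flows with no documented business reason, overdue reviews, and subprocessors that need downstream BAA confirmation.

```python
from dataclasses import dataclass
from datetime import date, timedelta

REVIEW_INTERVAL = timedelta(days=365)  # assumption: annual vendor review cadence


@dataclass(frozen=True)
class Vendor:
    name: str
    handles_phi: bool        # creates, receives, maintains, or transmits PHI
    baa_signed: bool
    data_received: tuple     # the fields this vendor actually gets
    business_reason: str
    last_review: date
    subprocessors: tuple = ()


def vendor_issues(vendors, today: date) -> list:
    """Return human-readable governance gaps for a vendor inventory."""
    issues = []
    for v in vendors:
        if v.handles_phi and not v.baa_signed:
            issues.append(f"{v.name}: receives PHI without a signed BAA")
        if v.data_received and not v.business_reason:
            issues.append(f"{v.name}: data flow has no documented business reason")
        if today - v.last_review > REVIEW_INTERVAL:
            issues.append(f"{v.name}: review overdue since {v.last_review.isoformat()}")
        if v.handles_phi and v.subprocessors:
            issues.append(f"{v.name}: confirm BAA coverage for subprocessors {', '.join(v.subprocessors)}")
    return issues
```

Running a check like this in CI against a version-controlled vendor file keeps the inventory honest when a new integration starts pushing PHI through a service that was previously harmless.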

If you’re evaluating external healthcare data integrations, OMOPHub's free medical API guide is a useful starting point for seeing how broad the API spectrum can get. The key is simple: every integration expands the trust boundary, whether it looks small or not.

The rule founders should remember

Vendor risk is architecture risk with paperwork attached.

That means legal review alone won’t save you. Your engineering team has to enforce least privilege, isolate sensitive paths, and keep logs that prove who accessed what. If that control plane is weak, the BAA becomes a false sense of safety.

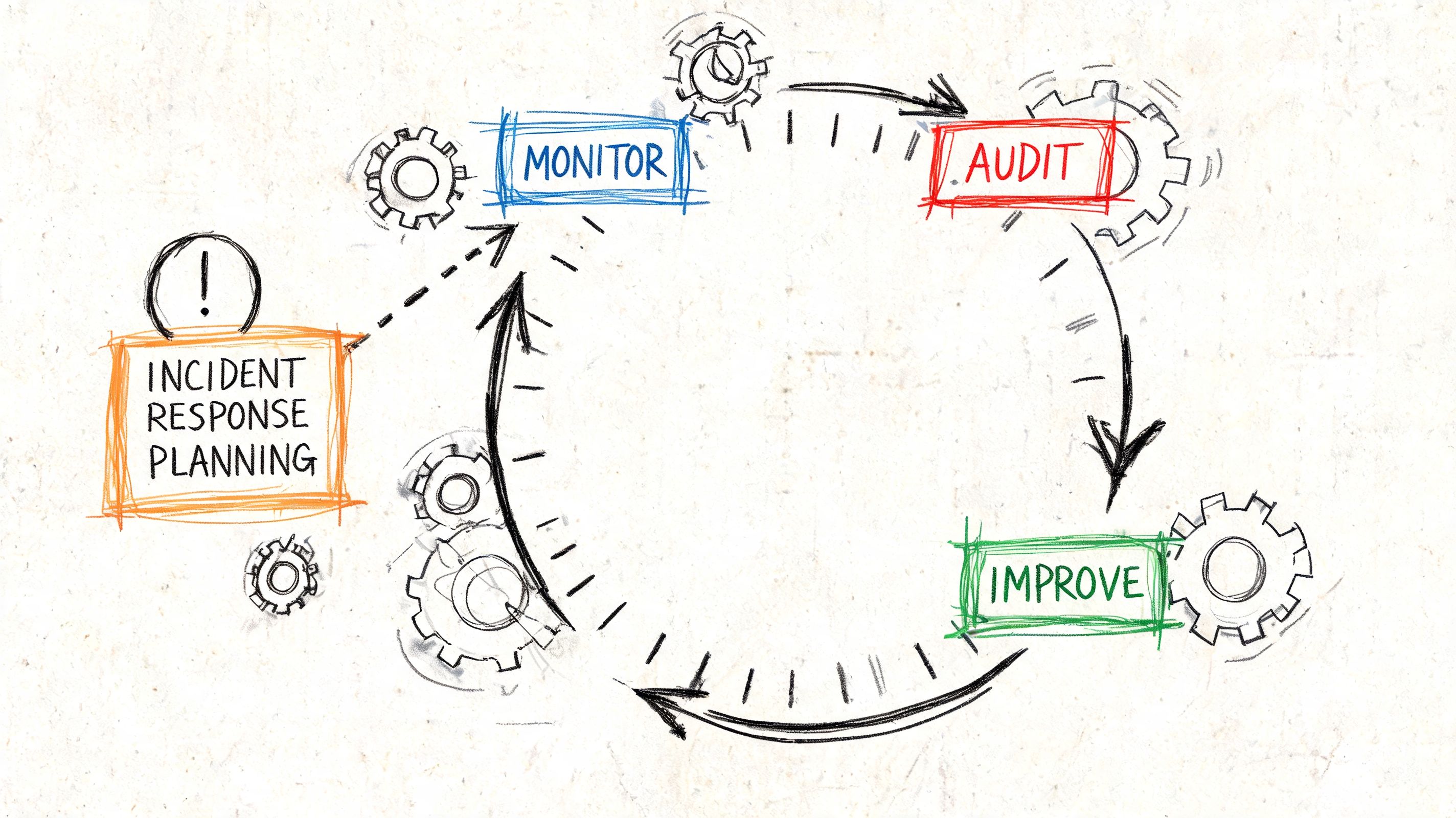

Continuous Auditing and Incident Response Planning

A secure launch means very little if your system drifts, your logs are useless, and your team freezes during an incident.

Mature HIPAA-compliant software development requires ongoing validation. Not annual panic. Not one penetration test before a customer demo. Ongoing operational proof.

Penetration testing and logging are non-negotiable

One HIPAA security testing reference notes that penetration testing can reveal up to 92% of vulnerabilities missed by automated scans alone, and that 70% of data breaches stem from misconfigured access controls, which effective logging and auditing are designed to detect.

That matters because automated scanning and real-world exploitation aren’t the same thing. Scanners are useful. Skilled testers still find logic flaws, privilege escalation paths, insecure workflows, and abuse cases that tools miss.

Your operating model should include:

Regular penetration testing: Cover apps, APIs, admin surfaces, and infrastructure.

Thorough audit logging: Record access attempts, administrative actions, sensitive reads, updates, and auth failures.

Tamper-resistant storage: Logs should be hard to alter and easy to retrieve.

Alerting for meaningful events: Failed MFA attempts, unusual access patterns, and privilege changes should trigger review.
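Tamper resistance can be strengthened at the application layer by hash-chaining log entries, so each entry commits to the one before it and any later edit breaks verification. The sketch below uses Python's standard `hashlib` and `json`; the `HashChainedAuditLog` name and the entry fields are assumptions. A hash chain only makes tampering evident: someone with full write access could rebuild the whole chain, so it is typically paired with write-once storage (for example, S3 Object Lock) or with periodically copying the latest hash somewhere the application cannot modify.

```python
import hashlib
import json
from datetime import datetime, timezone


class HashChainedAuditLog:
    """Append-only, tamper-evident audit log sketch: each entry commits to the previous hash."""

    GENESIS = "0" * 64

    def __init__(self):
        self.entries = []

    @staticmethod
    def _digest(body: dict) -> str:
        # Canonical JSON (sorted keys, fixed separators) so the same body always hashes the same.
        canonical = json.dumps(body, sort_keys=True, separators=(",", ":"))
        return hashlib.sha256(canonical.encode("utf-8")).hexdigest()

    def append(self, actor: str, action: str, resource_id: str, outcome: str) -> dict:
        prev_hash = self.entries[-1]["hash"] if self.entries else self.GENESIS
        body = {
            "ts": datetime.now(timezone.utc).isoformat(),
            "actor": actor,
            "action": action,
            "resource_id": resource_id,
            "outcome": outcome,
            "prev_hash": prev_hash,
        }
        entry = dict(body, hash=self._digest(body))
        self.entries.append(entry)
        return entry

    def verify(self) -> bool:
        """Recompute every hash; any edited, removed, or reordered entry breaks the chain."""
        prev_hash = self.GENESIS
        for entry in self.entries:
            body = {k: v for k, v in entry.items() if k != "hash"}
            if body["prev_hash"] != prev_hash or self._digest(body) != entry["hash"]:
                return False
            prev_hash = entry["hash"]
        return True
```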

What a usable incident response plan looks like

Most startup incident response documents are theater. They contain generic severity labels and nobody knows who is responsible for what.

A workable plan is shorter and more specific. It should answer:

Who declares an incident

Who has authority to contain systems

How evidence gets preserved

How stakeholders are notified

How root cause analysis gets documented

How corrective actions are tracked

Your first incident is the wrong time to discover nobody owns decision-making.

The plan also needs practice. Tabletop exercises matter because they expose operational confusion before a real breach does. Founders don’t need a giant bureaucracy here. They need clarity.

Auditing is also investor proof

Audit trails aren’t just for regulators. They tell investors your team can run a sensitive platform responsibly.

Good logs answer concrete questions fast. Who accessed the record. What changed. Which service initiated the action. Whether the access fit the user’s role. Whether the event correlated with other anomalies.
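Those questions are straightforward to answer when audit events are structured. The sketch below assumes events are exported as dictionaries with `ts`, `user_id`, `role`, `action`, `resource_id`, `allowed`, and optionally `service` fields; those field names, the role map passed in, and both functions are hypothetical. It reconstructs who touched a given record, through which service, and whether each action fit the actor's role, plus a simple count of repeated denials as a correlation signal.

```python
from collections import Counter

# Assumed event shape: {"ts", "user_id", "role", "action", "resource_id", "allowed", "service"?}
# with ISO-8601 UTC timestamps in one consistent format, so string order equals time order.


def access_report(events, record_id: str, role_allows: dict) -> list:
    """Who touched this record, via which service, and did the action fit their role?"""
    rows = []
    for e in events:
        if e["resource_id"] != record_id:
            continue
        fits_role = e["action"] in role_allows.get(e["role"], set())
        rows.append((e["ts"], e["user_id"], e["action"], e.get("service", "unknown"), fits_role))
    return sorted(rows)


def repeated_denials(events, threshold: int = 3) -> list:
    """Users with at least `threshold` denied attempts: a simple anomaly signal for review."""
    denied = Counter(e["user_id"] for e in events if not e["allowed"])
    return sorted(user for user, count in denied.items() if count >= threshold)
```

A row where the action was allowed but did not fit the actor's role is exactly the misconfigured-access pattern worth investigating.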

If you want a practical outside reference for evaluating ongoing controls, this patient data protection guide offers a useful checklist mindset. The strongest teams treat auditing as a product capability, not a compliance appendix.

Packaging Your Compliance for Investors

A secure system without documentation still creates friction in diligence.

Investors, enterprise buyers, and security reviewers need evidence they can inspect. If your team has done the hard engineering work but can’t package the proof, you’ll look less prepared than you are.

The diligence packet you should already have

Founders should maintain a clean compliance packet that includes:

Risk assessment records: Current risk analysis, identified issues, and remediation status.

Security policies and procedures: Access control, incident response, change management, logging, and vendor management policies.

Architecture documentation: Data flow diagrams, service boundaries, tenant isolation approach, and encryption design.

Training records: Proof that employees and contractors received security and PHI handling guidance.

Vendor and BAA register: A complete list of relevant vendors, their role, contract status, and review dates.

Testing evidence: Pen test summaries, scan results, remediation notes, and release controls.

Audit log retention approach: Where logs live, who can access them, and how integrity is preserved.

Why this affects valuation

Documentation reduces uncertainty. Uncertainty lowers valuation because it creates questions about hidden cost, execution quality, and future risk.

A well-organized diligence packet does the opposite. It tells investors the company understands regulated operations, manages technical debt deliberately, and won’t need a chaotic rebuild right after funding.

Use a simple rule. If a serious investor asks how your platform protects PHI, you should be able to answer with architecture, process, and evidence in the same conversation. Not promises. Not “we’re working on that.” Evidence.

HIPAA Development Frequently Asked Questions

Is there a formal HIPAA certification for software?

No universal HIPAA certification makes a product automatically compliant. HIPAA is about whether your organization, software, infrastructure, vendors, and operating practices meet the required safeguards.

Are HIPAA-eligible cloud services automatically compliant?

No. “HIPAA-eligible” means a service may be used in a compliant setup. Your team still has to configure it correctly, control access, manage logging, and govern data flow.

Does de-identified data remove all risk?

Not automatically. Founders need to be careful here, especially with AI systems. If data can be re-linked, mixed with other datasets, or used in workflows that reintroduce identity risk, you still have a serious exposure problem.

Is a BAA enough to approve a vendor?

No. A BAA is required when applicable, but vendor review also needs architectural scrutiny, access control review, and ongoing oversight.

Can we retrofit compliance after launch?

You can. It’s just a bad business decision in most cases. Retrofitting usually means heavier engineering cost, slower enterprise sales, more diligence friction, and a weaker technical moat.

If you’re building a HealthTech product and want senior-level help turning compliance into an investor-ready asset, Buttercloud helps founders go from idea to production-grade MVP with the architecture, DevOps discipline, and technical leadership needed to build secure, scalable products that hold up in due diligence.